
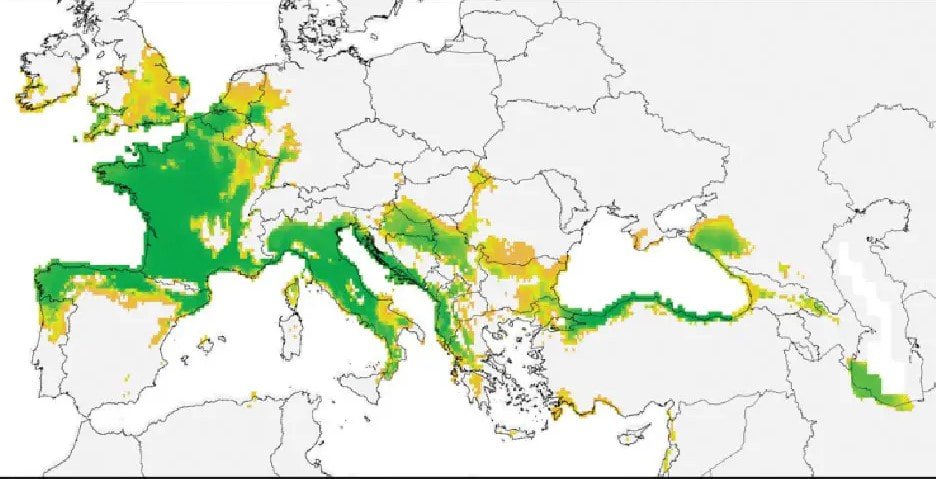

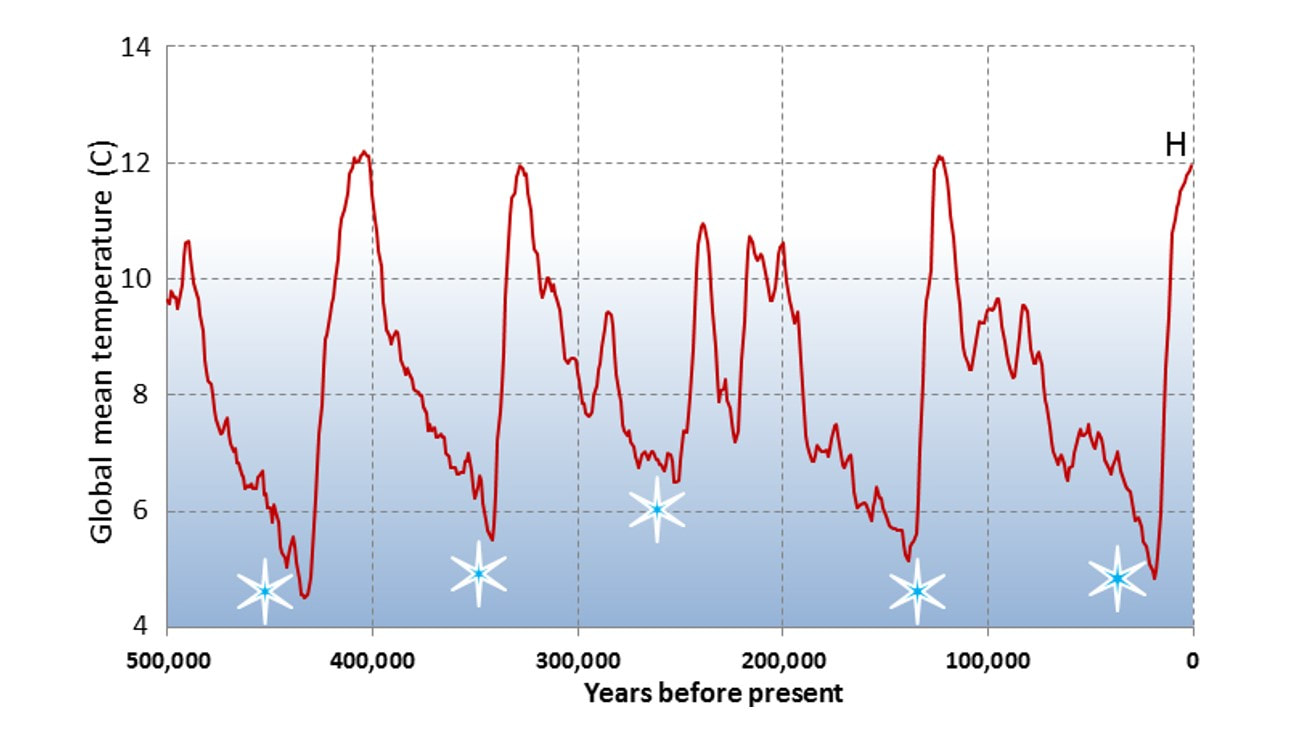

John writes …

Introduction.

Earlier in the month, I was interested to note that both Nature, the UK’s leading science research journal, and New Scientist, the UK’s leading popular science magazine, drew attention to an article about invasive species that had appeared in The New York Times (1). What do we mean by invasion? To start to answer this question I go back to the early and middle years of the 20th century and tell the story of three species of bird.

The first is the Collared Dove. This originated in India but over several millennia had slowly spread west and became well established in Turkey and SE Europe. Then, in the early 1930s it started to spread north and west, reaching The Netherlands in 1947 and first breeding in Britain (in Norfolk) in 1956. From there it went on to rapidly colonise the whole country. By 1976 it was breeding in every county in the UK, including the Outer Hebrides and Shetland, and is now a familiar inhabitant of parks and gardens.

[Image: Fulmar]

The second bird is a sea bird, the Fulmar, which breeds on maritime cliffs. It is now widely distributed in the north Atlantic and sub-arctic regions but until the late 19th century, its only breeding colonies were in Iceland and on St Kilda, a remote archipelago of small islands 64 km west of the Outer Hebrides. However, in the 1870s, their range started to expand outwards from St Kilda and from Iceland. Fulmars first bred in Shetland in 1878 and then slowly moved south via Orkney, down both the east and west coasts of Britain. They first bred at Bempton in Yorkshire in 1922 and at Weybourne in north Norfolk in 1947. The range extension included Ireland and Norway in Europe and westwards to Greenland and Canada. In Britain, the west coast and east coast expansions completed the circling of the British mainland by meeting on the south coast in the late 1970s. Since then, southern expansion has continued into northern France.

[Image: Little Egret]

The third species is the Little Egret, a marshland bird in the Heron family. Until the 1950s, this was regarded as a species of southern Europe and north Africa which was very occasionally seen in Britain. However, it then began to extend its range north, breeding in southern Brittany for the first time in 1960 and in Normandy in 1993. By this time, it was seen more and more frequently along the coasts of southern Britain, especially in autumn (2) and the first recorded breeding was in Dorset in 1996 (and in Ireland in 1997). Little Egrets now breed all over lowland Britain and Ireland and disperse further north in autumn and winter. These three birds give us clear pictures of ecological invasions. They have extended their range, become established in new areas and are now ‘part of the scenery’. In these cases, the invasions have not been harmful or detrimental to the newly occupied areas nor have any species already there been harmed or displaced. The invaders have been able to exploit previously under-exploited ecological niches and in doing so have increased the level of biodiversity in their new territories. The ‘balance of nature’ is thus a dynamic balance. Increases and reductions in the areas occupied by species have occurred and continue to do so. Such changes are usually the result of changes in the physical environment or of changes in the biological environment that result from physical changes. At one end of the scale there have been very large physical changes. Consider for example the series of glacial and inter-glacial periods that have been occurring over the past 2.6 million years during the Quaternary Ice Age. 
The last glacial period ended ‘only’ about 11,700 years ago and during the current inter-glacial period there have been several less dramatic and more localised fluctuations in climate which had some effect on the distributions of living organisms (although obviously not as dramatic as those shifts resulting from alternating glacial and inter-glacial periods).

Having said all this, I need to add that there is no consensus about the reasons for the three dramatic extensions to breeding range that I described above. However, some more recent and currently less dramatic changes in the ranges and/or in migratory behaviour of some bird species are thought to be responses to climate change.

[Image: Rabbit]

Introductions and invasions.

The natural invasions described above contrast markedly with the situation described in the New York Times feature: the author writes that ‘over the last few centuries’, humans have deliberately or accidentally introduced 37,000 species to areas outside their natural ranges. Nearly 10% of these are considered harmful and it is these harmful introduced species that are termed ‘invasive’.

But actually, human-caused animal introductions go back further than the last few centuries. For example, rabbits were introduced to Britain by the Romans, probably from Spain and there is evidence of their being used both as ‘ornamental’ pets and as food. It is not clear when the species became established in the wild in Britain although the best-supported view is that this started to occur in the 13th century. What is clear is that rabbits are now found throughout Britain, with the rather curious exceptions of Rùm in the Inner Hebrides and the Isles of Scilly.

The introduction of rabbits to Britain is probably representative of a slow trickle of introductions as human populations moved across Europe but the rate surely started to increase as the world was explored, colonised and exploited by various European nations.
The rate increased still further with, for example, the widespread collection of exotic plants and animals which gathered pace in the 18th and 19th centuries. And alongside the carefully garnered specimens for zoo or garden, there were doubtless species that in one way or another came along for the ride, just as in previous centuries, European explorers and colonisers accidentally took rats into lands where they had never previously occurred. Further, as international trade and travel have increased since Victorian times – and indeed are still increasing – so the possibility of introductions has also increased and is still increasing (the current rate is about 200 per year).

Even so, the number of 37,000, quoted above, seems difficult to comprehend, although I do not in any way doubt the reliability of the data (provided by the Intergovernmental Science-Policy Platform on Biodiversity and Ecosystem Services, IPBES). Further, as mentioned above, nearly 10% of these introduced species cause harm of some sort in their new environment. This may be harm to nature because of negative interactions with native species or because of damage to the environment; it may be damage to agriculture or other aspects of food systems or it may be harm to human health. Taken together, it is estimated that these harmful introductions ‘are causing more than $423 billion in estimated losses to the global economy every year’ (3).

Some examples of harmful introductions.

It is clearly not possible to discuss all 3500+ species whose introductions have been harmful so I am going to present a selection, mainly relevant to Britain, that gives examples of different levels and types of harm.

Plants.

Rhododendron.

There are several species of rhododendron but only one, Rhododendron ponticum (Common Rhododendron), is considered invasive. Its native distribution is bi-modal with one population around the Black Sea and the other on the Iberian peninsula.
These are actually fragments of its much wider distribution, including the British Isles, prior to the last glaciation. It has thus failed to re-colonise its former range after the retreat of the ice and our post-glacial eco-systems have developed without it. In the 1760s it was deliberately introduced to Britain and in the late 18th and early 19th centuries was widely sold by the growing nursery trade both as a hardy ornamental shrub and as cover for game birds. However, it is now regarded as an invasive species: it has covered wide areas in the western highlands of Scotland, parts of Wales and in heathlands of southern England. In all these regions, rhododendrons can quickly and effectively blanket wide areas, to the exclusion of most other plant species and to the detriment of general biodiversity.

Winter heliotrope.

[Image: Winter Heliotrope]

This plant is also listed as an invasive non-native species but its invasiveness is more localised than that of rhododendron. Its native range is the Mediterranean region. It was introduced into Britain as an ornamental garden plant in 1806 and was valued for its winter flowering (December to March) and the fragrance of the flowers. Only male plants were introduced and thus the species cannot produce seeds in this country. However, it spreads via vigorous underground rhizomes and can grow from fragments of those rhizomes. Nevertheless, it is not clear how this species became established in the wild, first recorded in Middlesex in 1835: were some unwanted plants/rhizome fragments thrown out? Once established, it quickly covers the ground with its large leaves smothering or shading out nearly all other species. In the two locations that I know where it is growing in the wild, the area covered is increasing year by year.
Interestingly, at least one national park authority has a specific policy to control and/or exterminate winter heliotrope while the Royal Horticultural Society has published advice about controlling it when it is grown as a garden plant.

Himalayan Balsam (also known in some cultures as Kiss-me-on-the-mountain).

[Image: Himalayan Balsam]

As the name implies this plant originates from the Himalayan region of Asia. It was introduced to Britain in 1839 by Dr John Forbes Royle, Professor of Medicine at King’s College, London (who also introduced giant hogweed and Japanese knotweed!). The balsam was promoted as an easy-to-cultivate garden plant with attractive and very fragrant flowers. In common with other members of the balsam family, including ‘Busy Lizzie’, widely grown as a summer bedding plant, Himalayan Balsam has explosive seed pods which can scatter seeds several metres from the parent plant. It is no surprise then that the plant became established in the wild and by 1850 was already spreading along river-banks in several parts of England. Its vigorous growth out-competes other plants while its heady scent and abundant nectar production may be so attractive to pollinators that other species are ignored. Further, the explosive seed distribution means that spread has been quite rapid. It now occurs across most of the UK and in addition to its out-competing other species, it is also regarded as an agent of river-bank erosion.

Invertebrate animals.

Asian Hornet.

This species has been very much in the news this year and last. The main concern is that it is a serious predator of bees, including honey bees, so serious in fact that relatively small numbers of hornets can make serious inroads into the population of an apiary, even wiping it out completely in the worst cases. So why would anyone want to introduce this species? Answer: they wouldn’t. Asian hornets arrived in France by accident in 2004, in boxes of pottery imported from China.
From there the species has spread to all the countries that have a border with France. Indeed, as the map at the head of this article shows, ideal conditions exist for the Asian hornet across much of Europe. It was first noted in Britain in 2016 and since then there have been 58 sightings, nearly all in southern England (4); 53 of the sightings have included nests, all of which have been destroyed. The highest number of sightings has been this year, with 35 up to the first week of September. By contrast, there is no evidence that Asian hornets are established in Ireland.

Box-tree moth.

[Image: Box-Tree Caterpillar and Moth]

Those who follow me on Facebook or X (formerly Twitter) will know of my encounters with the Box-tree Moth. There has been a major outbreak this year in the Exeter area, with box hedges and bushes being almost completely defoliated by the moth’s caterpillars. At the time of writing, the adults are the most frequently sighted moth species in our area. Box-tree Moth is native to China, Japan, Korea, India, far-east Russia and Taiwan; there are several natural predators in these regions; these keep the moth in check and so damage to box bushes and trees is also kept in check. However, in Europe there are no natural predators and thus a ‘good’ year for the moth can be a very bad year for box. Looking at the pattern of colonisation in Europe it appears that it has been introduced more than once, almost certainly arriving on box plants imported from its native area, as is proposed for its arrival in Britain in 2007 (5). Like the Asian hornet then, its introduction was accidental but it has left us with a significant ecological problem to deal with.

[Image: Cane toad. Credit: Froggydarb, Wikimedia Commons, CC BY-SA 3.0.]

Vertebrate animals.

Cane toad.

The cane toad is surely the best-known example of deliberate introductions that have gone very badly wrong.
As we discuss this, we need to dispel from our minds any notions of toads based on the familiar common toad. Cane toads are, as toads go, enormous, weighing up to about 1.3 kg; they also secrete toxins so that their skin is poisonous to many animals; the tadpoles are also very toxic. Cane toads are native to Central America and parts of South America and have also been introduced to several islands in the Caribbean. The cane toad is a predator of insect pests that feed on and damage sugar cane. It was this feature that led in 1936 to its introduction into Queensland, Australia where it was hoped that it would offer some degree of protection to the sugar cane crop. However, as one commentator stated, ‘The toads failed at controlling insects, but they turned out to be remarkably successful at reproducing and spreading themselves.’ They have spread from Queensland to other Australian states; they have no predators in Australia (in their native regions, predators have evolved that can deal with the toxins). They eat almost anything which means that they are a threat to several small animal species, firstly because they compete for food and secondly because the toads eat those animals themselves. The population of cane toads in Australia is now estimated at 200 million and growing and they are regarded as one of the worst invasive species in the world.

Grey Squirrel (also known as the Eastern Grey Squirrel).

[Image: Grey squirrel]

Many of us enjoy seeing grey squirrels; they are cute, they are inventive and clever and they often amuse us. But wait a moment – and aiming now specifically at British readers of this blog – these agile mammals that we like to see are not the native squirrels of the British Isles. The native squirrel is the red squirrel which has, over many parts of the UK, been displaced by its larger, more aggressive relative.
Grey squirrels imported from the USA were introduced into the grounds of stately homes and large parks from around 1826 right through to 1929. It was from these introduced populations that the grey squirrel spread into all English counties – although it was not until the mid-1980s that they reached Cumbria and the most distant parts of Cornwall. This is an invasion that has been happening during the lifetime of many of our readers. But what is the problem? The problem is that in expanding its range, the grey squirrel has displaced the native red squirrel which is now confined to the margins of its previous range. I need to say that there are several factors that have contributed to this decline but that the grey squirrel is certainly a major one. The grey is more aggressive than the red which is important in that they often compete for the same foods and the grey also seems to be more fertile. Further, the grey squirrel carries a virus which is lethal to the red squirrel. Thus, overall, the introduction of a species to add interest to a stately home or to a large area of parkland has had a significant effect on ecosystems. Final comment.

As I stated at the beginning, ecosystems are in a state of dynamic balance with some degree of self-regulation. We need to think about this before taking any action that may interfere with that dynamic balance. And also, in view of the ways in which Asian hornet and Box-tree Moths arrived, check incoming packages very carefully!

John Bryant
Topsham, Devon
September 2023

All images are credited to John Bryant unless stated otherwise.

(1) Manuela Andrioni, Invasive Species Are Costing the Global Economy Billions, Study Finds, The New York Times, 4 September 2023.
(2) On one autumn afternoon in the early 1990s I counted 34 in a flock on the Exe estuary marshes.
(3) Data from IPBES: IPBES Invasive Alien Species Assessment: Summary for Policymakers | Zenodo.
(4) Asian hornet sightings recorded since 2016 - GOV.UK (www.gov.uk).
(5) The Box-Tree Moth Cydalima Perspectalis (2019) (rhs.org.uk).


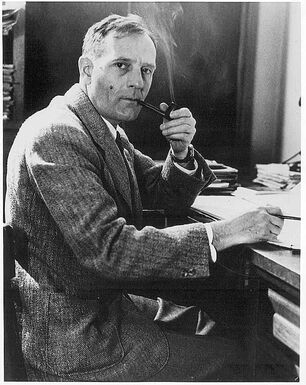

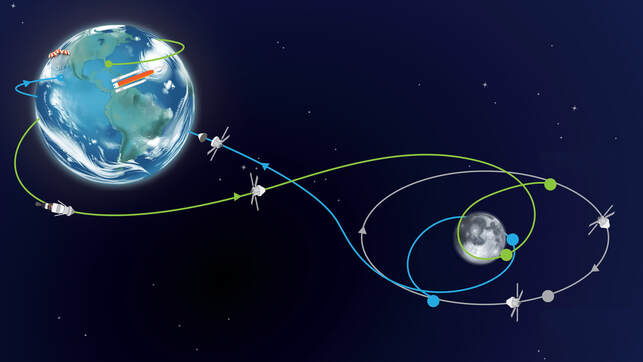

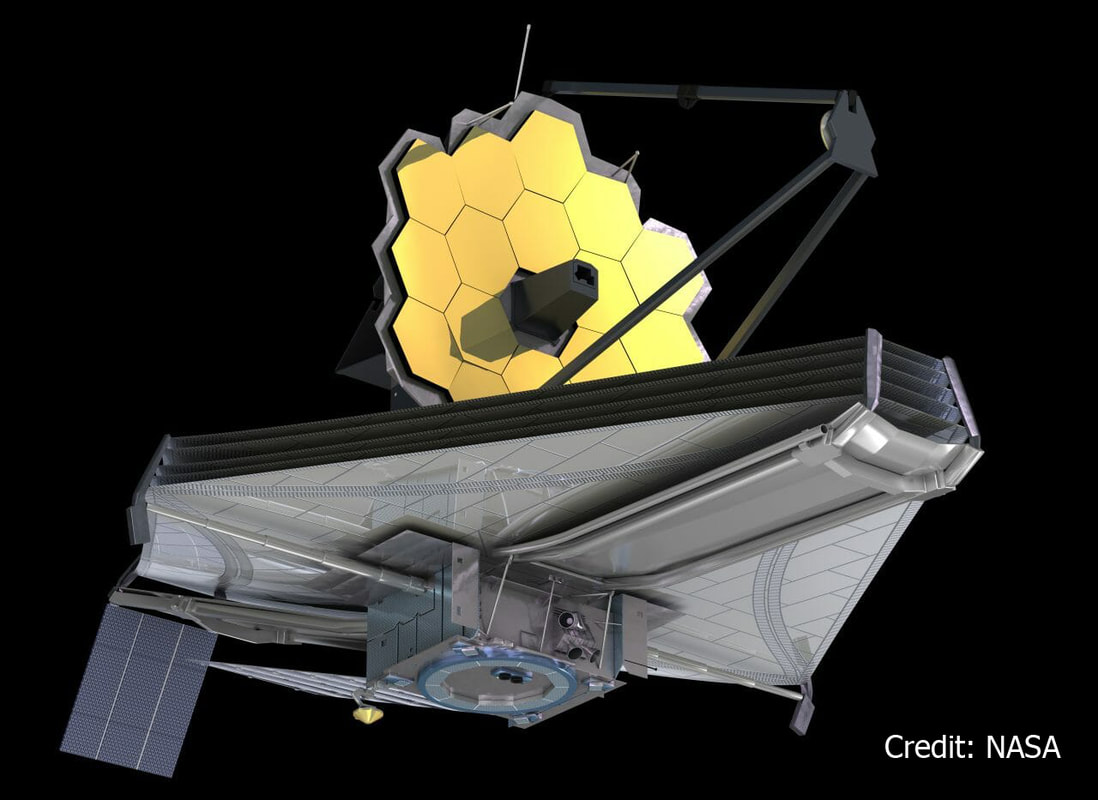

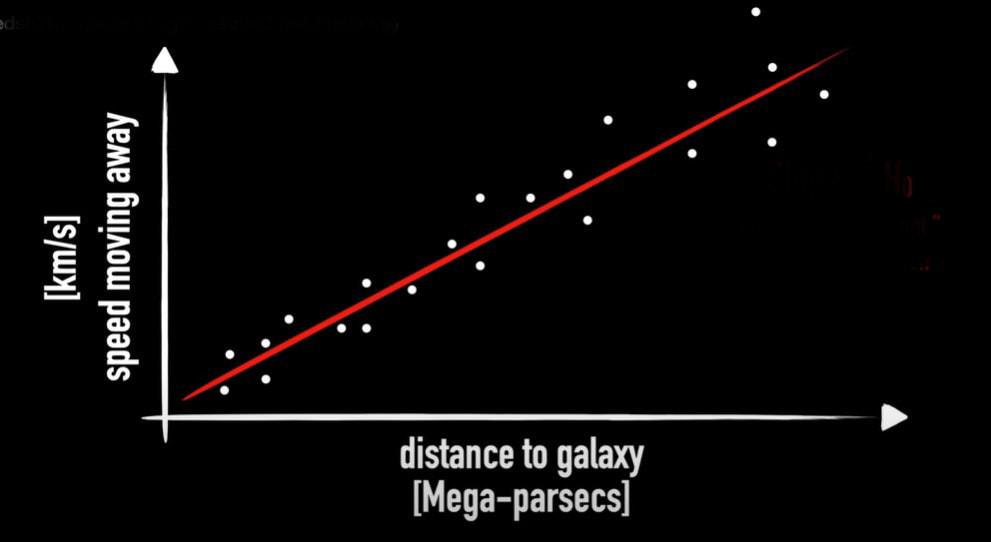

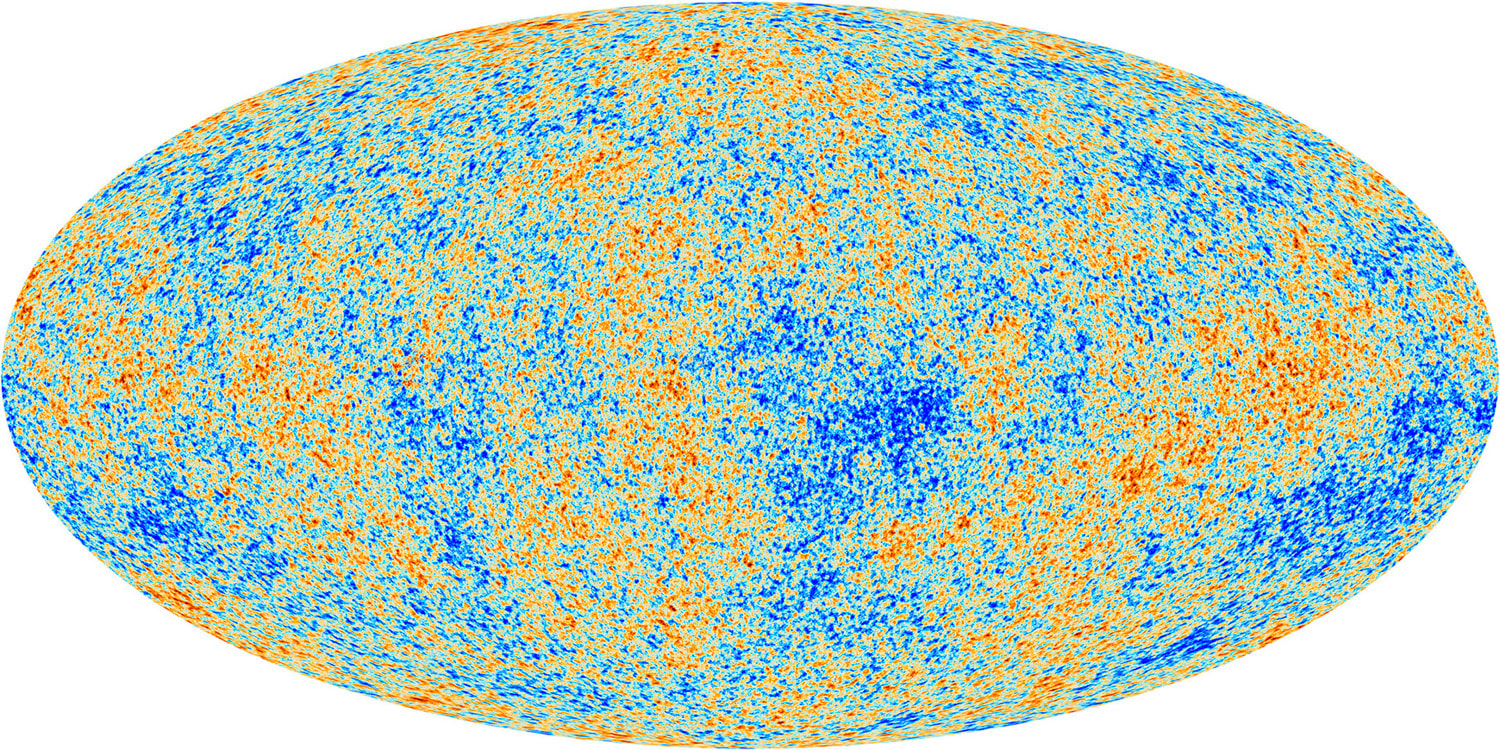

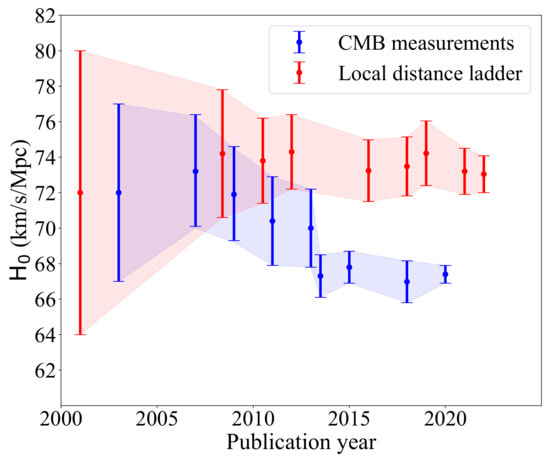

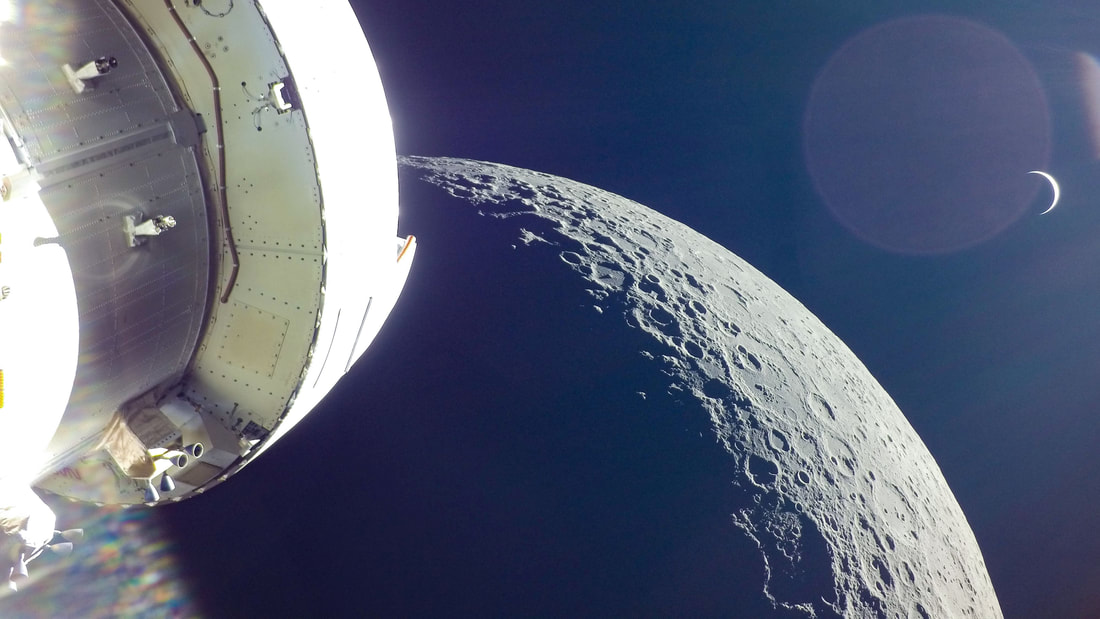

Graham writes ...

[Image credit: freepik.com]

Since the James Webb Space Telescope (JWST) started doing the business, there has been a deluge of rather dubious reports on social media about a variety of crises in cosmology. For example, there have been statements that the telescope has proven that the Big Bang didn’t happen, that the Universe is twice as old as we thought it was, that the very early massive galaxies that JWST has observed are physically impossible, and so on! First, let me reassure you that these ‘stories’ are not true. When we look at the details, it’s clear that this amounts to rumour-mongering or ‘false news’!

That’s not to say that the status quo – the standard model of cosmology – is sacrosanct. I’m sure that the new space observatory will make observations that will genuinely challenge our current models. This is not a bad thing. It’s just the way that science works. The new instrument provides a means to modify and enhance our current understanding, and will hopefully allow us to learn lots of new physics!

However, having said all that, there is a genuine ‘crisis’ in cosmology at the moment which demands attention, and this is the topic for this month’s blog post. It concerns the value of Hubble’s constant, an important parameter which describes the expansion of the Universe and which is usually denoted by Ho (H subscript zero). This is named after Edwin Hubble, the astronomer who first experimentally confirmed that the Universe is expanding. The currently accepted value of Ho is approximately 70 km/sec per Megaparsec.

As discussed in the book (1) (pp. 57-59), Hubble discovered that distant galaxies were all moving away from us, and the further away they were the faster they were receding. This is convincing evidence that the Universe, as a whole, is expanding (1) (Figure 3.4). To understand the value of Ho above, we need to look at the standard units that are used to express it.
I think we are all familiar with km/sec (kilometres per second) as a measure of speed, in this case the speed of recession of a distant galaxy. But what about the Megaparsec (Mpc for short) bit? A parsec (parallax second) is a measure of distance, and the ‘second’ refers to an angle rather than a second of time. If you take a degree (angle) and divide it by 60 you get a minute of arc. If you then divide the minute by 60 you get a second of arc. So a second of arc is a tiny angle, being one 3,600th of a degree.

To understand how this relates to astronomical distances we need to think about parallax. There is a simple way to illustrate what this is. If you hold a finger up in front of your eyes, and then look at it alternately with one eye and then the other, your finger will appear to move its position relative to the background. Furthermore, it will appear to change its position more when your finger is close to your face than when it is further away. Keeping this simple observation in mind, the same principle of parallax can be applied to measuring the distance to nearby stars. The diagram below illustrates the idea.

[Image: Edwin Hubble. Credit: public domain]

If you observe the position of a star from opposite sides of the Earth’s orbit around the Sun it will appear to move relative to the background of distant stars. When the parallax angle P (shown in the diagram) takes the value of one second of arc, then trigonometry says that the star is 1 parsec away, which is about 3.26 light years.

So, getting back to Hubble’s constant, Ho says that the speed of recession of galaxies increases by 70 km/sec for every Megaparsec they are distant, where a Megaparsec = a million parsecs = 3,260,000 light years. Therefore to determine the current value of Ho, you can observe a number of galaxies to estimate their distance and rate of recession and plot them on a graph as shown below. The slope of the resulting plot will give the value of Ho.
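For readers who like to check the arithmetic, here is a minimal Python sketch of the two ideas above: the size of a parsec, and the ‘slope of the plot’ estimate of Ho. It rests on a few assumptions of mine rather than anything taken from the book: the parallax baseline is taken to be one Earth-Sun distance (the usual convention), the galaxy distances and velocities are made-up illustrative numbers rather than real observations, and the straight line is forced through the origin. The function names are hypothetical.

```python
import math

# Standard values of the constants (not taken from the blog post).
AU_M = 1.495978707e11              # Earth-Sun distance in metres
LIGHT_YEAR_M = 9.4607304725808e15  # one light year in metres


def parsec_in_light_years():
    """Distance at which a 1 AU baseline subtends a parallax of one arcsecond."""
    one_arcsec_rad = math.radians(1.0 / 3600.0)  # one 3,600th of a degree
    parsec_m = AU_M / math.tan(one_arcsec_rad)
    return parsec_m / LIGHT_YEAR_M


def fit_hubble_constant(distances_mpc, velocities_km_s):
    """Least-squares slope of the line v = Ho * d, forced through the origin."""
    numerator = sum(d * v for d, v in zip(distances_mpc, velocities_km_s))
    denominator = sum(d * d for d in distances_mpc)
    return numerator / denominator


# Hypothetical galaxies: distances in Mpc, and recession speeds built from an
# assumed Ho of 70 km/sec per Mpc plus some invented scatter (km/sec).
SAMPLE_DISTANCES_MPC = [10.0, 50.0, 100.0, 200.0, 400.0]
SAMPLE_SCATTER_KM_S = [150.0, -200.0, 100.0, -300.0, 250.0]
SAMPLE_VELOCITIES_KM_S = [
    70.0 * d + s for d, s in zip(SAMPLE_DISTANCES_MPC, SAMPLE_SCATTER_KM_S)
]

if __name__ == "__main__":
    print(f"1 parsec is about {parsec_in_light_years():.2f} light years")
    h0 = fit_hubble_constant(SAMPLE_DISTANCES_MPC, SAMPLE_VELOCITIES_KM_S)
    print(f"Fitted Ho is about {h0:.1f} km/sec per Mpc")
```

With this invented data the fitted slope comes out close to the value of 70 that was used to build it, which is all the sketch is meant to show. Real measurements involve far more galaxies and careful treatment of the errors in both distance and speed.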
Hubble was the first to do this in the 1920s, and his estimate was around 500 km/sec per Megaparsec – some way off, but still a remarkable achievement given the technology available at that time.

However, having been somewhat distracted by the units in which Ho is expressed, what is the issue that I introduced in my second paragraph? There are currently two independent ways of measuring the value of Ho. The first of these, sometimes referred to as the ‘local distance ladder’ (LDL) method, is essentially the process we have already described. We establish an observational campaign where we measure the distances and rates of recession of many galaxies, spread across a large range of distances, to estimate the ‘slope of the plotted curve’ as described above.

[Image: A Type Ia supernova in M101, 21 million light years distant. Credit: B.J. Fulton/Las Cumbres Observatory Global Telescope Network.]

However, this is not as easy as it sounds – measuring huge distances to remote objects in the Universe is problematic. To do this, astronomers rely on something called the ‘local distance ladder’, as mentioned above. The metaphor of a ladder is very apt as the method of determining cosmological distances involves a number of techniques or ‘rungs’. The lower rungs represent methods to determine distances to relatively close objects, and as you climb the ladder the methods are applicable to determining larger and larger distances. The accuracy of each rung is reliant upon the accuracy of the rungs below. For example, the first rung may be parallax (accurate out to distances of 100s of light years), the second rung may be using cepheid variable stars (1) (p. 58) (good for distances of 10s of millions of light years), and so on. The majority of these techniques involve something called ‘standard candles’.
These are astronomical bodies or events that have a known absolute brightness, such as cepheid variable stars and Type Ia supernovae (the latter can be used out to a distance of about a billion light years). The idea is that if you know their actual brightness, and you measure their apparent brightness as seen from Earth, you can easily estimate their distance. This summary is a rather simplified account of the LDL method, but hopefully you get the idea.

[Image: The ESA Planck space telescope. Credit: ESA.]

The second method to estimate the value of Ho employs a more indirect technique using measurements of the cosmic microwave background (CMB). As discussed in the book (1) (pp. 60-62) and in the May 2023 blog post, the CMB is a source of radio noise spread uniformly across the sky that was discovered in the 1960s. At that time, it was soon realised that this was the ‘afterglow’ of the Big Bang. Initially this was very high energy, short wavelength radiation in the intense heat of the early Universe, but with the subsequent cosmic expansion, its wavelength has been stretched so that it currently resides in the microwave part of the electromagnetic spectrum. The characteristics of this radio noise have been extensively studied by a number of balloon and spacecraft missions, and the most accurate data we have were acquired by the ESA Planck spacecraft, named in honour of the physicist Max Planck who was a pioneer in the development of quantum mechanics. The map of the radiation produced by the Planck spacecraft is shown below. The temperature of the radiation is now very low, about 2.7 K (2), and the variations shown are very small – at the millidegree level (3). The red areas are the slightly warmer regions and the blue slightly cooler.
To estimate the value of Ho using the CMB data, cosmologists use what they refer to as the Λ-CDM (Lambda-CDM) model of the Universe – this is what I have called ‘the standard model of cosmology’ in the book (1) (pp. 63 – 67, 71 – 76). This model assumes that Einstein’s general relativity is ‘correct’ and that our Universe is homogeneous and isotropic (the same everywhere and in all directions) at cosmological scales. It also assumes that our Universe is geometrically flat and that it contains a mysterious entity labelled dark matter that interacts gravitationally, but otherwise weakly, with normal matter (CDM stands for ‘cold dark matter’). It also supposes that there’s another constituent called dark energy (that’s the Λ bit, Λ being Einstein’s cosmological constant (1) (pp. 55, 56)), which maintains a constant energy density as the Universe expands.

So, how do we get to a value of Hubble’s constant from all this? We start with the CMB temperature map, which corresponds to an epoch about 380,000 years after the Big Bang. The blue (cooler and higher density) fluctuations represent the structure which will seed, through the action of gravity, the development of the large-scale structure of stars and galaxies that we see today. The idea is that, using the CMB data as the initial conditions, the Λ-CDM model is evolved forward using computer simulation to the present epoch. This is done many times while varying various parameters, until the best fit to the Universe we observe today is achieved. This allows us to determine a ‘best fit value’ for Ho, which is what we refer to as the CMB value.

Now, we get to the crunch – what exactly is the so-called ‘crisis in cosmology’? The issue is illustrated in the diagram below, which charts the value of Ho obtained by the two methods in various studies from the year 2000 to the present day. The points show the estimated value of Ho and the vertical bars show the extent of the ±1σ errors in these values.
It can be seen that the two methods were showing reasonable agreement with each other, within the bounds of error, until around 2013. However, thereafter the more accurate estimates have diverged from one another. The statistics say that there is a 1 in 3.5 million chance that this situation is a statistical fluke – in other words there is confidence at the 5σ level that the divergence is real.

Approximate current values of Ho using the two methods are: Ho = 73.0 km/sec per Mpc (LDL) and Ho = 67.5 km/sec per Mpc (CMB). This is quite a considerable difference, which influences the resulting model of the Universe. For example, mathematicians among my readers will notice that the inverse of Ho has units of time, and in fact this gives a rough measure of the age of the Universe. Our best estimate of the age of the Universe currently is around 13.8 billion years, and the approximation, based on the inverse of Ho, for the LDL method is 13.4 billion years, and that for the CMB method 14.5 billion years. So, there is roughly a billion years’ difference in the age estimate between the two methods.

So, what can we deduce from all this? Well, put succinctly:

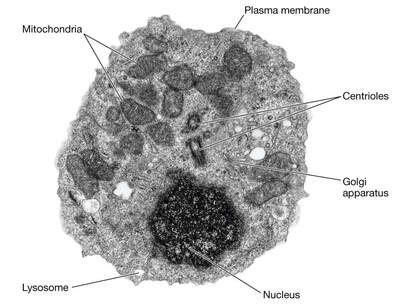

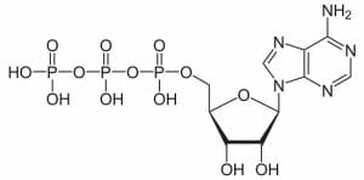

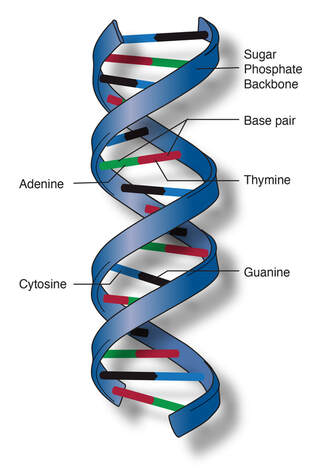

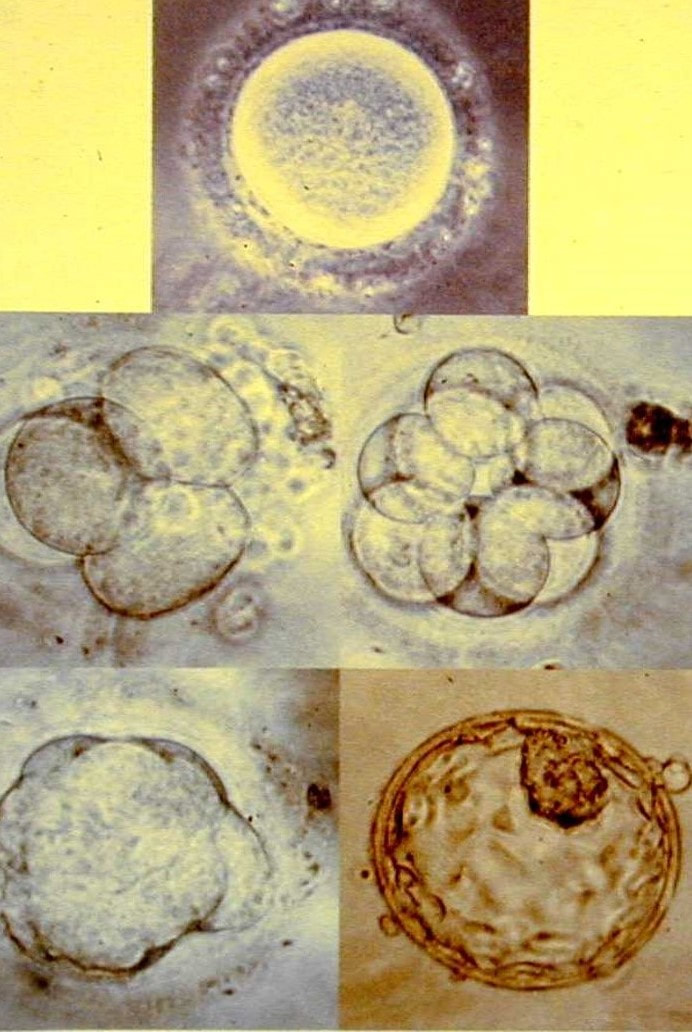

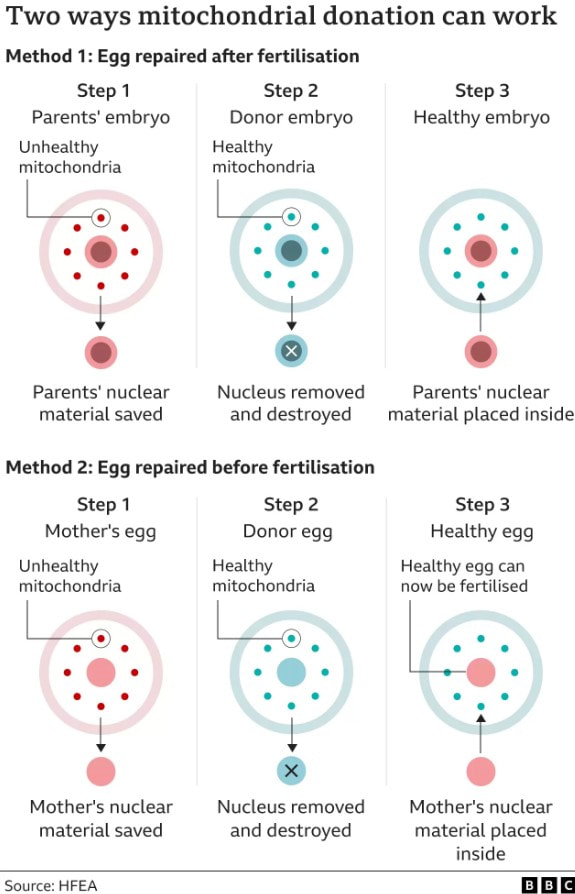

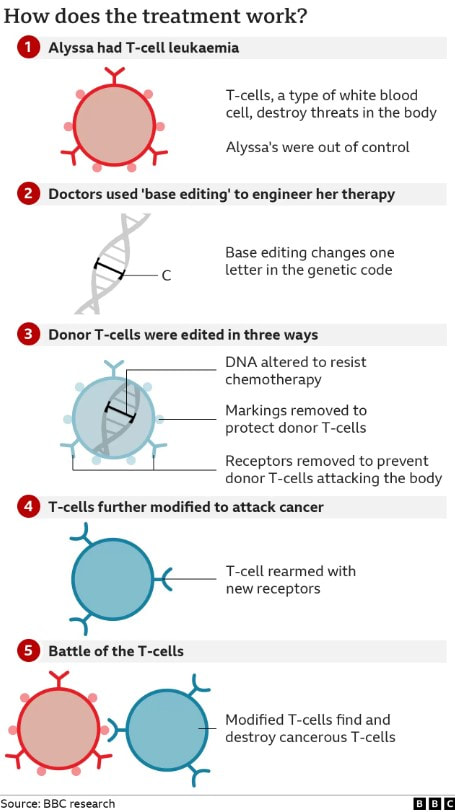

Either (1) the LDL method for estimating cosmic distances is flawed, or (2) our best model of the Universe (the Λ-CDM model) is wrong.

Either way, it looks like this divergence in the estimates of Ho will provoke further experimental work to try to understand and resolve the issue. It is certainly the case that JWST’s greater angular resolution can aid in trying to resolve the crisis by investigating the various rungs of the LDL in unprecedented detail. At the time of writing there are various proposals for telescope time in the pipeline to look at this, and some observational campaigns underway. Alternatively, if the Λ-CDM model turns out to be flawed, it will be a shock, but then hopefully it will present an opportunity for scientists to learn lots of new physics. As always, things are not straightforward and the ‘crisis’ may have far-reaching implications for our understanding of the Universe in which we live.

Graham Swinerd
Southampton, UK
August 2023

(1) Graham Swinerd and John Bryant, From the Big Bang to biology: where is God?, Kindle Direct Publishing, 2020.
(2) The Kelvin temperature scale is identical to the Celsius scale but with zero Kelvin at absolute zero (-273 degrees Celsius). Hence, for example, water freezes at +273 K and boils at +373 K.
(3) A millidegree is one thousandth of a degree.

[Image: Electron micrograph of a human lymphocyte. Credit: taken from hazell11bio.blogspot.com.]

John writes …

Cells and organelles

In order to comment on that headline, I need to start with a spot of cell biology. In all ‘eukaryotic’ organisms (i.e., all organisms except bacteria and archaea), cells contain within them several subcellular compartments or organelles. Two of these organelles, the mitochondrion (plural, mitochondria) and in plants, the chloroplast, contain a small number of genes, a reminder of their evolutionary past.
These organelles were originally endosymbiotic within cells of the earliest eukaryotes. During subsequent evolution, the bulk of the endosymbionts’ genes have been taken over by the host’s main genome in the nucleus, leaving just a small proportion of their original genomes in the organelles. In mammals for example, the genes in the mitochondria make up about 0.0018 (0.18%) of the total number of genes.

[Image: Diagram of ATP molecule]

When mitochondria go wrong

The main function of mitochondria is to convert the energy from food into a chemical energy currency called ATP. The average human cell makes and uses 150 million ATP molecules per day, emphasizing the absolutely key role that mitochondria play in the life of eukaryotes. This brings us back to mitochondrial genes. Although few in number, they are essential for mitochondrial function. Mutations in mitochondrial genes may lead to a lethal loss of mitochondrial function or may, in less severe cases, cause some impairment in function.

During my time at the University of Exeter, I had direct contact with one such case. A very able student had elected to undertake his third-year research project with me and a colleague. However, towards the end of his second year his eyesight began to fail such that, by the start of his third year, his vision was so impaired that a lab-based project was impossible. In a matter of a few months his eyesight had declined to about 15% of what he started with. The cause was a mitochondrial genetic mutation affecting the energising of the retina and hence causing impaired vision (2), a condition known as Leber hereditary optic neuropathy, symptoms of which typically appear in young adults. I need to say that, serious though it was for the student, this is one of the milder diseases caused by mutations in a mitochondrial gene; many have much more serious effects (3), although I also need to say that diseases/syndromes based on mitochondrial genes are very rare.
Is it GM or isn’t it? This brings us back to the BBC’s headline. Mitochondrial genes are only inherited from the mother. In fertilisation, the sperm delivers its full complement of nuclear genes to the egg cell but its mitochondria do not feature in the subsequent development of the embryo. In the early years of this century, scientists in several countries developed methods for replacing ‘faulty’ mitochondria in an egg cell or in a fertilised egg (one-cell embryo) with healthy mitochondria. This provides a way for a woman who knows she carries a mitochondrial mutation to avoid passing on that mutation to her offspring. However, there is another issue to deal with here. The replacement of one set of mitochondrial genes with another is clearly an example of genetic modification (GM), albeit an unusual example. In the UK, the Human Fertilisation and Embryology Act, whilst allowing spare embryos to be used in experimental procedures involving GM, prohibited the implantation of GM embryos to start a pregnancy. This would include embryos with ‘swapped’ mitochondrial DNA (or embryos derived from egg cells with swapped mitochondrial DNA). In order to bring this procedure into clinical practice, the law had to be changed, which duly happened in 2015. Prior to the debates that led to the change in law, pioneers of the technique gave several presentations to explain what was involved; indeed, I was privileged to attend one of those presentations addressed to the bioscience and medical science communities. It was during this time that some opposition to the technique became apparent and the phrases ‘three-parent IVF’ and ‘three-parent babies’ became widespread both among opponents of the procedure and in the print and broadcast media. Credit: John Bryant. Should we, or shouldn’t we? I will come back to the opposition in a moment but before that I want to ask whether our readers think that the use of the term ‘three-parent’ is justifiable. 
My friend, co-author and fellow-Christian, Linda la Velle and I discuss this on pages 52 to 54 of Introduction to Bioethics. In our view, the phrase is inaccurate and misleading and I was pleased to see that the recent BBC headline (in the title of this post) did not use it, even if the headline still conveyed slightly the wrong impression. ‘Designer baby’. Credit: taken from www.makeuseof.com. Returning to consider the opposition to the technique, there were some who believed that allowing it would open the gate to wider use of GM techniques with human embryos, leading to the possibility of ‘designer babies’. However, there were other reasons for opposition. Since its inception with the birth of Louise Brown in 1978, IVF has had its opponents who believe that it debases the moral status of the human embryo. According to their view, which, I need to say, is not widely held (4), the one-cell human embryo should be granted the same moral status as a born human person, from the ‘moment of conception’. There can be no ‘spare’ embryos because that would be like saying there are spare people. This brings us to the two different techniques described in the recent BBC article. How is it done? As hinted at briefly already, there are two methods for removing faulty mitochondria and replacing them with fully functioning mitochondria, as shown in the HFEA diagram (below) reproduced by the BBC. In passing, I note that the ability to carry out these techniques owes a lot to what was learned during the development of mammalian cloning. In the first technique, an egg (5) is donated by a woman who has normal mitochondria. This egg is fertilised by IVF as is an egg from the prospective mother who is at risk of passing on faulty mitochondria. We now have two fertilised eggs/one-cell embryos. 
The nucleus (which contains most of the genes) is removed from the embryo derived from the donated egg and is replaced with the nucleus from the embryo with faulty mitochondria. Thus nuclear transfer has been achieved, setting up a ‘hybrid’ embryo (nucleus from the embryo with faulty mitochondria, ‘good’ mitochondria in the embryo derived from the donated egg). The embryo can then be grown on for two or three days before implantation into the prospective mother’s uterus, hopefully to start a pregnancy. However, readers will immediately realise that this method effectively involves destruction of a human one-cell embryo, raising again objections from those who hold the view that the early embryo has the same moral status as a person (see above). This brings us to the second method. It again involves donation of an egg with healthy mitochondria but this is enucleated without being fertilised. Nuclear transfer from an egg of the prospective mother with faulty mitochondria then creates a ‘hybrid’ egg which is only then fertilised by IVF and cultured for a few days prior to implantation into the uterus of the prospective mother. The technique does not inherently involve destruction of a one-cell human embryo. However, since the procedure, including IVF, will be carried out with several eggs, there still remains the question of spare embryos, mentioned above. Further, since this technique leads to less success in establishing pregnancies than the first technique, it is likely not to be the technique of first choice in these situations. So when was that, exactly? The BBC report which stimulated me to write this blog talked of a ‘UK First’ but that cannot mean that the world’s first case was in the UK. There are well-documented reports from at least three different countries, going back to 2016, of babies born following use of nuclear transfer techniques. The headline must imply that this was the first case in the UK. 
However, following the change in the law (mentioned above), in 2017 the Human Fertilisation and Embryology Authority (HFEA) licensed the Newcastle Fertility Centre to carry out this procedure. It was predicted that the first nuclear transfer/mitochondrial donation baby would be born in 2018 (although we emphasise that because of the rarity of these mitochondrial gene disorders the number of births achieved by this route will be small). So, when was the first such baby actually born in the UK? The answer is that we do not know. The HFEA, which regulates the procedure, is very protective of patient anonymity and does not release specific information that might identify patients. All we know is that ‘fewer than five’ nuclear transfer/mitochondrial donation babies have been born and that the births have taken place between 2018 and early 2023 – so, in respect of the date of this ‘UK First’ we are none the wiser. John Bryant

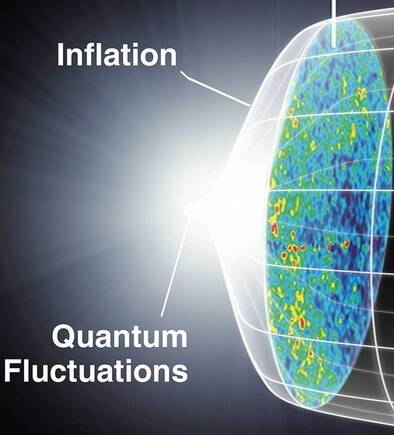

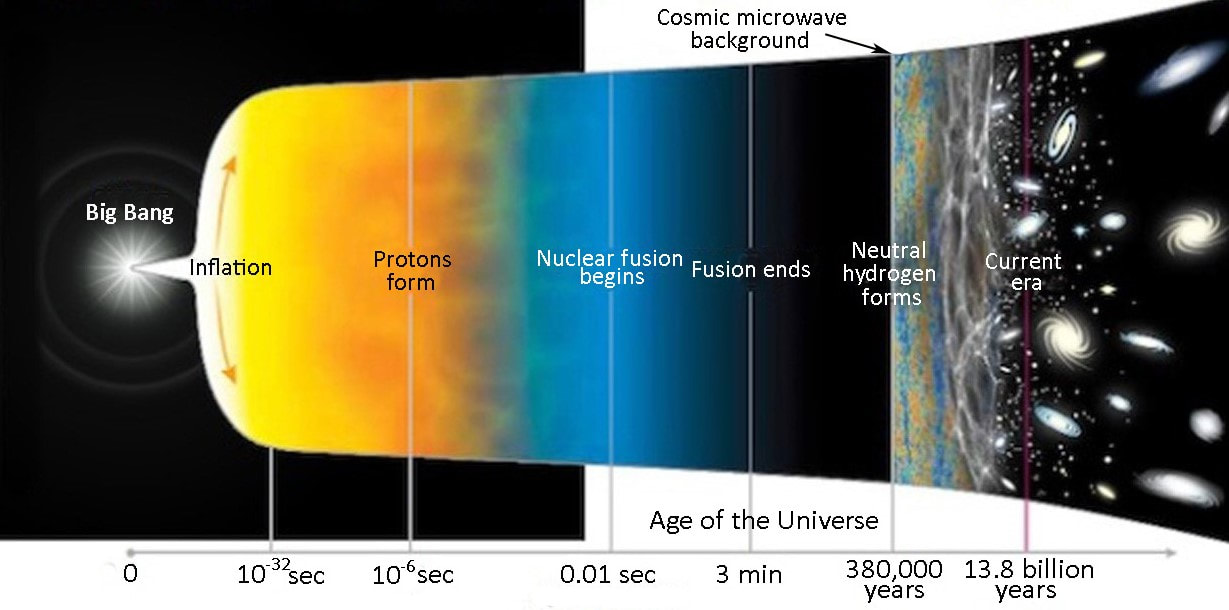

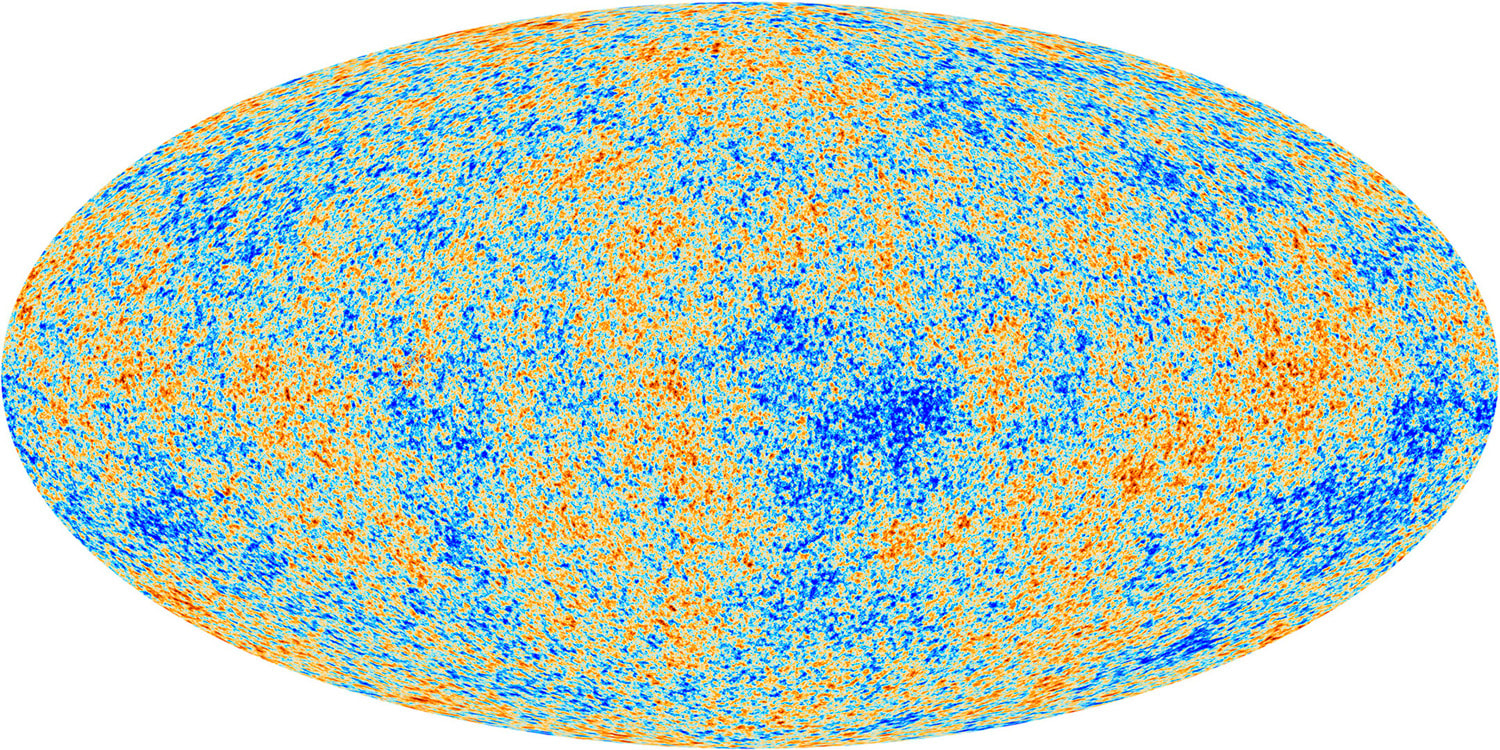

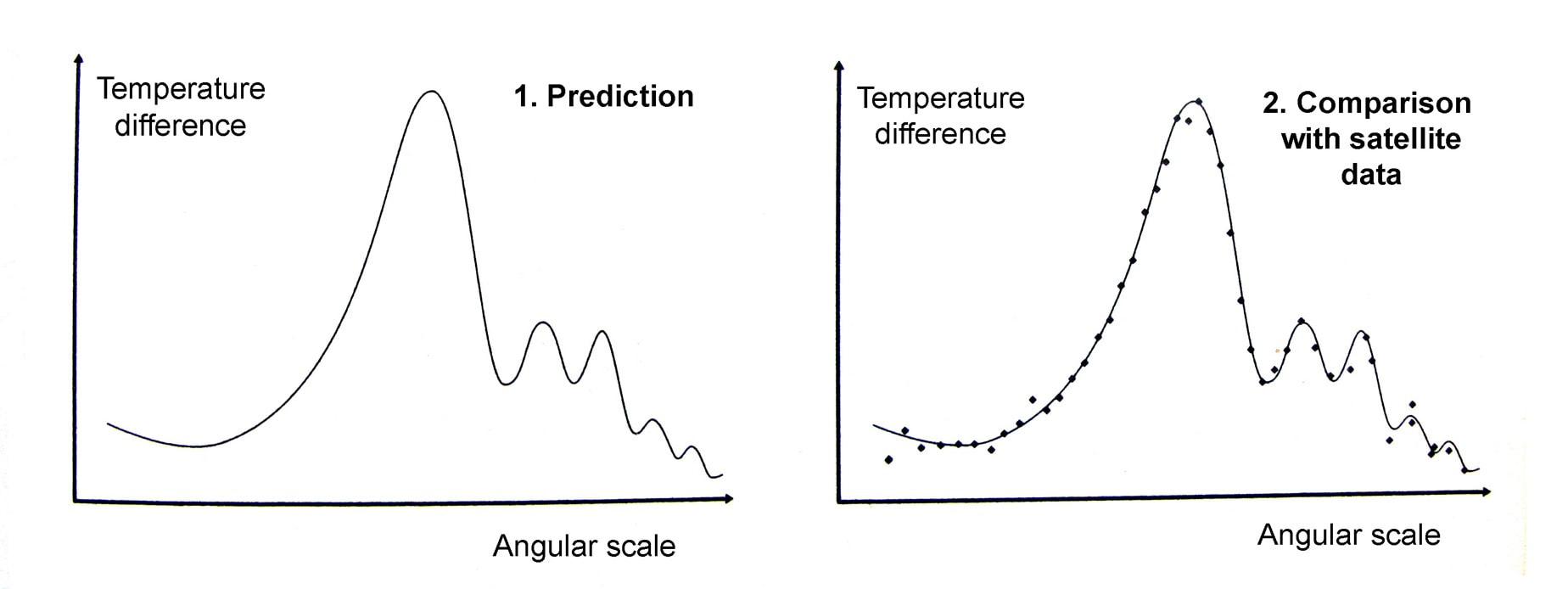

Topsham, Devon 28 June 2023 (1) Baby born from three people’s DNA – BBC News. (2) My colleague and I were able to offer him a computer-based project and the university’s special needs office provided all that he needed to complete his degree. (3) See, for example: Mitochondrial DNA Common Mutation Syndromes, Children’s Hospital of Philadelphia – chop.edu. (4) In Life in Our Hands (IVP, 2004) and Beyond Human? (Lion Hudson, 2012), I discuss the wide range of views on this topic among Christians. (5) Although I use the singular for the sake of convenience, several donated and several maternal eggs are used in these procedures. Graham writes … This question has occupied cosmologists since the theory of cosmic inflation was first developed by Alan Guth in 1979, who realised that empty space could provide a mechanism to account for the expansion of the Universe. In the theory it is assumed that a tiny volume of space-time in the early Universe – very much smaller than a proton – came to be in a ‘false vacuum’ state. Effectively, in this state space is permeated by a large, constant energy density. The effect of this so-called ‘inflaton field’ is to drive a rapid expansion of space-time for as long as the false vacuum state exists. I won’t go into the detail of how this happens here (1), but as a consequence the tiny nugget of space-time grows exponentially, before the energy density decays to acquire a ‘true vacuum’ state once again, bringing the inflationary period to an end. Theoretically, this expansion takes place between roughly 10 to the power -36 (10e-36) sec (2) and 10e-32 sec after the bang (ATB) – periods of time that make the blink of an eye seem like an eternity. To generate a universe with the characteristics we observe today, the expansion factor must be of the order of 10e+30. To give an impression of what this degree of expansion means, if you expand a human egg cell by a factor of 10e+25, you get something about the size of the Milky Way galaxy! 
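For readers who like to check such comparisons, here is a rough sketch of the arithmetic in Python. The egg cell diameter (about 0.1 mm) is an assumed round figure that does not appear in the post, and the variable names are purely illustrative; the factor is the one written as 10e+25 in the text, meaning 10 to the power 25.

```python
# Order-of-magnitude check of the egg-cell comparison.
# ASSUMPTION: a human egg cell is roughly 0.1 mm (1e-4 m) across.
EGG_CELL_DIAMETER_M = 1e-4
EXPANSION_FACTOR = 1e25            # "10 to the power 25", as quoted in the text
METRES_PER_LIGHT_YEAR = 9.461e15   # standard conversion factor


def expanded_size_light_years(size_m: float, factor: float) -> float:
    """Return the size, in light years, of an object stretched by `factor`."""
    return size_m * factor / METRES_PER_LIGHT_YEAR


size_ly = expanded_size_light_years(EGG_CELL_DIAMETER_M, EXPANSION_FACTOR)
print(f"Expanded egg cell: roughly {size_ly:,.0f} light years across")
```

Under that assumption the result is of the order of 100,000 light years, which is comparable to commonly quoted estimates for the diameter of the Milky Way’s disc.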
The diagram below helps, I think, to illustrate the time line of developments ATB. Clearly the horizontal time axis is not linear! Georges Lemaître (left) with Albert Einstein. Lemaître was a Belgian priest and physicist. Credit: public domain. To me, the events of the inflation era seem extraordinary, prompting the question in the title of this post. However unlikely these events may seem, the cosmic inflation paradigm has been very successful in resolving problems which have dogged the ‘conventional’ theory of the big bang as originally proposed by Georges Lemaître in 1927 (3), for example, the horizon and flatness problems. A summary of these issues, and others, can be found here. When we use Einstein’s field equations of general relativity, we make an assumption that on cosmic scales of billions of light years, the Universe is homogeneous and isotropic. In other words, it appears the same at all locations and in all directions. These simplifying symmetries are what allow us to use the mathematics to study the large-scale dynamics of the Universe. A nice analogy is to think of the Universe as a glass of water, and each entire galaxy as a molecule of H2O. In using the equations, we are examining the glass of water, and ignoring the small-scale molecules. However, cosmology at some point has to come to terms with the idea that when you examine the Universe at smaller scales you find clumpy structures like galaxies. And here we are faced with a conundrum. The primary question addressed in this post is along the lines of ‘what is the origin of the inhomogeneities that led to the development of the clumpy structures like galaxies and stars that we observe today?’ Without these, clearly we would not be here to contemplate the issue. If we assume that cosmic inflation occurred, then the initial ‘fireball’ would be microscopically tiny. 
Its extreme temperature and energy density, just ATB but before the inflationary expansion, would be able to reach equilibrium, smoothing out any variations. So, the question posed above becomes key. If the resulting density and temperature of the inflated universe was uniform, then how did galaxies form? Where did the necessary inhomogeneities come from? It is gratifying that the inflation theory offers progress in answering this question – indeed, remarkably, the initial non-uniformities that gave rise to galaxies and stars may have come from quantum mechanics! Werner Heisenberg. Credit: Bundesarchiv / public domain. This magnificent idea arises from the interplay between inflationary expansion and the quantum uncertainty principle. The uncertainty principle, originally proposed by Werner Heisenberg, says that there are always trade-offs in how precisely complementary physical quantities can be determined. The example most often quoted involves the position and speed of a sub-atomic particle. The more precisely its position is determined, the less precisely its speed can be determined (and the other way round). However, the principle can also be applied to fields. In this case, the more accurately the value of a field is determined, the less precisely the field’s rate of change can be established, at a given location. Hence, if the field’s rate of change cannot be precisely defined, then we are unable to determine the field’s value at a later (or earlier) time. At these microscopic scales the field’s value will undulate up or down at this or that rate. This results in the observed behaviour of quantum fields – their value undergoes a characteristic frenzied, random jitter, often referred to as quantum fluctuations. This takes us back then to the inflaton field that drives the dramatic inflationary expansion of the early Universe. 
In the discussion in the book (1), as the inflationary era is drawing to a close it is assumed that the energy of the field decreased and arrived at the true vacuum state at the same time at each location. However, the quantum fluctuations of the field’s value mean that it reaches its value of lowest energy at different places at slightly different times. Consequently, inflationary expansion shuts down at different locations at different moments, resulting in differing degrees of expansion at each location, giving rise to inhomogeneities. Hence, inflationary cosmology provides a natural mechanism for understanding how the small-scale nonuniformity responsible for clumpy structures like galaxies emerges in a Universe that on the largest scales is comprehensively homogeneous. The cosmic microwave background was created at around 380,000 years ATB. 3D space is represented here as a 2D disk imprinted with temperature fluctuations. Credit: Source unknown. So quantum fluctuations in the very early Universe, magnified to cosmic size by inflation, become the seeds for the growth of structure in the Universe! But is all this just theoretical speculation, or can these early seeds be observed? The answer to this question is very much ‘yes!’. In 1964 two radio astronomers, Arno Penzias and Robert Wilson, made a breakthrough discovery (serendipitously). They detected a source of low energy radio noise spread uniformly across the sky, which they later realised was the afterglow of the Big Bang. This radiation, which became known as the cosmic microwave background (CMB), is essentially an imprint of the state of the Universe at around 380,000 years ATB (4). 
Initially the Universe was a tiny, but unimaginably hot and dense ‘fireball’, in which the constituents of ‘normal matter’ – protons, neutrons and electrons – were spawned. At first, electromagnetic (EM) radiation was scattered by its interaction with the charged particles so that the Universe was opaque. However, when the temperature decreased to about 3,000 degrees Celsius, atoms of neutral hydrogen were able to form, and the fog cleared. At this epoch, the EM radiation was released to propagate freely throughout the Universe. Since that time the Universe has expanded by a factor of about one thousand so that the radiation’s wavelength has correspondingly stretched, bringing it into the microwave part of the EM spectrum. It is this remnant radiation that we now call the CMB. It is no longer intensely hot, but now has an extremely low temperature of around 2.72 Kelvin (5). This radiation temperature is uniform across the sky, but there are small variations – in one part of the sky it may be 2.7249 Kelvin and at another 2.7251 Kelvin. So the radiation temperature is essentially uniform (the researchers call it isotropic), but with small variations as predicted by inflationary cosmology. Max Planck. Credit: public domain. Maps of the temperature variations in the CMB have been made by a number of satellite missions with increasing accuracy. The most recent and most accurate was acquired by ESA’s Planck probe, named in honour of Max Planck, one of the pioneering scientists who developed the theory of quantum mechanics. The diagram below displays the primary results of the Planck mission, showing a colour coded map of the CMB temperature fluctuations across the sky. Note that these variations are small, amounting to differences at the millidegree level (6). 
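As a back-of-envelope check on the numbers quoted above, the short Python sketch below uses only the rounded figures from the text, together with the standard result that the temperature of freely travelling radiation falls in proportion to the stretching of its wavelength. The variable names are illustrative, and the inputs are the post’s ‘about’ values rather than precise measurements.

```python
# Back-of-envelope check using the rounded figures quoted in the text.
CELSIUS_TO_KELVIN_OFFSET = 273.15

# Temperature when the "fog cleared": about 3,000 degrees Celsius.
release_temp_k = 3000 + CELSIUS_TO_KELVIN_OFFSET

# The Universe has since expanded by a factor of about one thousand,
# and radiation temperature scales as 1 / (stretch factor).
stretch_factor = 1000
temp_today_k = release_temp_k / stretch_factor

# Size of the example variation between two patches of sky, in millidegrees.
variation_millikelvin = (2.7251 - 2.7249) * 1000

print(f"Estimated CMB temperature today: about {temp_today_k:.1f} K")
print(f"Example variation: about {variation_millikelvin:.1f} millidegrees")
```

The estimate comes out at roughly 3 Kelvin, in the same ballpark as the measured value of around 2.72 Kelvin; the gap simply reflects the rounding in ‘about 3,000 degrees’ and ‘about one thousand’. The example variation is a fraction of a millidegree.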
This map may not look very impressive to the untrained eye, but it represents a treasure trove of information and data about the early Universe which has transformed cosmology from the theoretical science it used to be to a science based on a foundation of observational data. The final icing on the cake is what happens when you carry out calculations based on the predictions of quantum mechanics that we discussed earlier. The details are not important here, but diagram 1 shows the prediction where the horizontal axis shows the angular separation of two points on the sky, and the vertical axis shows their temperature difference. In diagram 2 this prediction is compared with satellite observations and as you can see there is remarkable agreement! This success has convinced many physicists of the validity of inflationary cosmology, but many remain to be convinced. Despite the remarkable success of the theory, the associated events that occurred 13.8 billion years ago seem to me to be extraordinary. And then, of course, there is also the issue of the origin and characteristics of the inflaton field that kicked it all off.

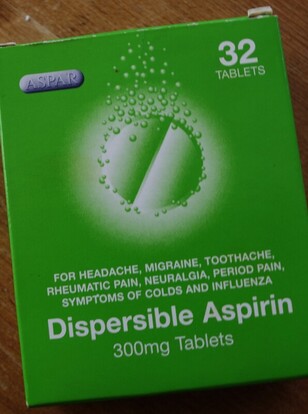

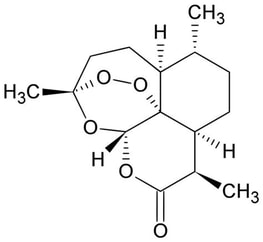

Despite all this, however, I am blown away by the notion that the 100 billion or more galaxies in our visible Universe may be ‘nothing but quantum mechanics writ large across the sky’, to quote Brian Greene (7). Graham Swinerd Southampton, UK May 2023 (1) Graham Swinerd and John Bryant, From the Big Bang to Biology – where is God?, Kindle Direct Publishing, 2020, see Section 3.6 for more detail. (2) In the website editor, I am unable to use the usual convention of employing superscripts to represent ‘10 to the power of 30’ for example. In the text I have used the notation 10e+30 to denote this. (3) Georges Lemaître’s publication of 1927 appeared in a little-known Belgian journal. It was subsequently published in 1931 in a journal of the Royal Astronomical Society. (4) Graham Swinerd and John Bryant, From the Big Bang to Biology: where is God?, Kindle Direct Publishing, 2020, pp. 60 – 63 for more details about the CMB. (5) The Kelvin temperature scale is identical to the Celsius scale but with zero Kelvin at absolute zero (-273 degrees Celsius). Hence, for example, water freezes at +273 Kelvin and boils at +373 Kelvin. (6) A millidegree is one thousandth of a degree. (7) Brian Greene, The Fabric of the Cosmos, Penguin Books, 2004, pp. 308-309. John writes … ‘Cardiff scientists look at honey as drug alternative’ (1) – a headline that intrigued both me and Dave Piper of Trans World Radio (TWR-UK), leading to his request to interview me about it (2). On the surface it seems rather strange or even crazy but there are good reasons why it may not be as strange as it appears. I will return to this later but first I want to look more generally at our dependence on plants for important pharmaceuticals. The World Health Organisation (WHO) publishes a long list of Essential Medicines, last updated in 2021 (3); over 100 of them were first derived from or are still extracted from flowering plants, including the examples I discuss below. 
In the immortal words of Michael Caine ‘Not a lot of people know that’. Over the millennia of human existence, people have turned to the natural world for help in combatting a wide range of ailments from everyday aches and pains to serious illnesses. Many of these folk remedies were not, as we would now say, ‘evidence-based’: they did not actually work. Equally however, many have now been shown to point to the existence of genuinely therapeutic chemicals, as shown by four examples. I have discussed them in some detail because I really want our readers to understand how dependent we are on the natural biodiversity of the plant kingdom – a feature that is constantly under threat. Willow. Aspirin. Accounts of the use of extracts of willow (Salix) leaves and bark to relieve pain and to treat fevers occur as far back as 2,500 BCE, as evidenced by inscriptions on stone tablets from the Sumerian period. Willow bark was also used by the ancient Egyptians while Hippocrates (460-377 BCE) recommended an extract of willow bark for fever, pain and child-birth. The key chemical in willow has the common name of salicin, first purified in the early 19th century. In the human body, this is metabolised to salicylic acid which is the bioactive compound that has the medicinal effects. Meadowsweet (Spiraea ulmaria) leaves also contain salicin and the medicinal properties of this plant had led in earlier centuries to the Druidic Celts regarding it as sacred. Salicylic acid was synthesised in the lab for the first time in 1859 and the pure form was used with patients shortly after that. Unfortunately, the acid has a very unpleasant taste and also irritates the stomach so is not ideal for use as a regular medication. However, in 1897, a team led by Dr Felix Hoffmann at Friedrich Bayer and Co. in Germany managed to synthesise a derivative, acetyl-salicylic acid, which has a less nasty taste and is much less of an irritant than the parent compound. 
After successful clinical trials, the compound was registered as aspirin in 1899. This was the first drug to be made synthetically and the event is regarded as the birth of the pharmaceutical industry. Interestingly, the place of acetyl-salicylic acid in the WHO list is based, not on its pain-killing properties but on its action as a blood-thinner. Morphine. Morphine is a powerful narcotic and pain killer that is synthesised by the opium poppy (Papaver somniferum); extracts of the plant, known as opium, have been used medicinally and recreationally for several thousand years, both in Europe and in what we now call the Middle East. Indeed, as long ago as 4,000 BCE in Mesopotamia, the poppy was called the ‘plant of joy.’ In Europe, the medicinal use of opium, often as a tincture or as a solution in alcohol, was popularised from the 16th century onwards. In addition, the seeds or extracts of seeds, often in the form of a poppy seed cake or mixed with milk or honey, have been given to babies and small children to help them sleep (4) (the Latin name of the plant means sleep-inducing poppy). Opium poppy. Credit: Louise Joly via Wikimedia Commons. The active ingredient of opium is morphine which was first isolated by a German pharmacist, Friedrich Sertürner in 1803. It was thus the first medicinally active ingredient to be isolated from a plant (as opposed to salicylic acid – see above – which was the first to be chemically synthesised). It was originally called morphium, after the Greek god of dreams, Morpheus, but the name was changed to morphine when Merck started to market it in 1827. Over the past 15 years methods have been developed for its chemical synthesis but none of these is as yet anything like efficient enough to meet the demand for the drug and so it is still extracted from the plant itself. Structure of Artemisinin: Public Domain. Artemisinin. 
In China, extracts of the sweet wormwood plant (Artemisia annua) have been used to treat malaria for about 2,000 years. The active ingredient was identified as an unusual and somewhat complex sesquiterpene lactone (illustrated, for those with a love of organic chemistry!) which was named artemisinin. It was added to the WHO list after extensive clinical trials in the late 20th century. Artemisinin has not been chemically synthesised ‘from scratch’ and initial efforts to increase production involved attempts to breed high-yielding strains of A. annua. However, it proved more efficient to transfer the relevant genes into the bacterium Escherichia coli or yeast cells, both of which can be grown on an industrial scale (5). The genetically modified cells synthesise artemisinic acid which is easily converted into artemisinin in a simple one-step chemical process. Rosy Periwinkle. Credit: Royal Botanic Gardens, Kew via Creative Commons. Vinblastine and Vincristine. These two anti-cancer drugs were isolated from leaves of the Madagascar periwinkle (6) (which is not actually confined to Madagascar). The original Latin name for this plant was Vinca rosea (hence the names of the two drugs) but it is now Catharanthus roseus. In Madagascar, a tea made with the leaves had been used as a folk remedy for diabetes and the drug company, Eli Lilly took an interest in the plant because of this. However, controlled trials in the late 1950s and early 1960s gave, at best, ambiguous results and no anti-diabetic compounds were found in leaf extracts. However, the company did detect a compound that prevented chromosomes from separating and thus inhibited cell division. The compound was named vincristine. Further, a research team at the University of Western Ontario discovered a similar compound with similar inhibitory properties; this was named vinblastine. 
Because the two compounds inhibit cell division, they were trialled as anti-cancer drugs and are now part of an array of pharmaceuticals which may be used in chemotherapy (7). In the past 15 years, both have been chemically synthesised from simple precursors but we still rely on extraction from plants for the bulk of the required supply. I also want to emphasise that these are far from being the only anti-cancer drugs derived from plants, as discussed by Dr Melanie-Jayne Howes of the Royal Botanic Gardens, Kew (8). As she says, plants are far better at synthetic chemistry than humans! Back to the beginning. In the book we describe the natural world as a network of networks of interacting organisms. Given that context, we need to think about the natural function of these compounds that humans make use of in medicine. A brief overview suggests that many of them have a protective role in the plant. This may be protection against predators or disease agents or against the effects of non-biological stresses. Thinking about the former brings us back to the topic that I started with, namely looking for ‘drug alternatives’. The story starts with another interaction, namely that the fungus Penicillium notatum synthesises a compound that inhibits the growth and reproduction of bacteria; that compound was named penicillin and was the first antibiotic to be discovered (in 1928). However, as with the examples of drugs from plants mentioned earlier, we see hints of the existence of antibiotics in ancient medical practices. In China, Egypt and southern Europe, mouldy bread had been used since pre-Christian times to prevent infection of wounds and the practice of using ‘moulds’ (probably species of Penicillium or Rhizopus) to treat infections was documented in the early 17th century by apothecary/herbalist/botanist John Parkinson who went on to be appointed as Apothecary to King James I. 
It is not just plants that supply us with essential drugs: the fungus kingdom also comes up with the goods. Antibiotics are a subset of the wider class of anti-microbials, chemicals that are effective against different types of micro-organisms, including micro-fungi, protists and viruses. Artemisinin, mentioned above, is thus an anti-microbial. Many anti-microbials are – or were initially – derived from natural sources while others have been synthesised by design to target particular features of particular micro-organisms. There is no doubt that their use has saved millions of lives. However, we now have a major problem. The over-use of antibiotics and other anti-microbials in medicine, in veterinary medicine and in agricultural animal husbandry has accelerated the evolution of drug-resistant micro-organisms against which anti-microbials are ineffective. The WHO considers this to be a major threat to our ability to control infectious diseases and hence there is a widespread search for new anti-microbials. The search is mainly focussed on the natural world although there are some attempts to design drugs from scratch using sophisticated synthetic chemistry. The Cardiff team who headlined this article are looking at plants and at products derived from plants. The ‘drug alternatives’ mentioned in the BBC headline refer to the team’s hopes of finding new anti-microbials. They have noted that in earlier centuries, honey had been used in treating and preventing infection of wounds and they wonder whether it contains some sort of anti-microbial compound. The plants that have contributed to a batch of honey can be identified by DNA profiling of any cellular material, especially pollen grains, in the honey. The team is especially interested in honey derived or mainly derived from dandelion nectar because dandelions synthesise a range of protective chemicals and in herbal medicine are regarded as having health-giving properties. 
So … the hunt is on and we look forward to hearing more news from Cardiff in due course. John Bryant

Topsham, Devon April 2023 All images by John Bryant, except those credited otherwise. (1) Cardiff scientists look at honey as drug alternative – BBC News. (2) www.youtube.com. (3) World Health Organisation model list of essential medicines – 22nd list, 2021. (4) This was certainly practised in parts of central Europe in the mid-20th century. (5) See J. A. Bryant & L. La Velle, Introduction to Bioethics (2nd edition), Wiley, 2018, p. 200. (6) See J.A. Bryant & L. La Velle, Introduction to Bioethics (2nd edition), Wiley, 2018, pp. 241-242. (7) Interestingly, a recent paper claims that traditional Indian medicine has made use of the anti-tumour properties of C. roseus: Mishra & Verma, International Journal of Research in Pharmacy and Pharmaceutical Sciences, 2017, Vol. 2, pp. 20-23. (8) Plants and the evolution of anticancer drugs – Royal Botanic Gardens Kew. Graham writes … Isaac Newton was the first to really appreciate the concept that mathematics has the power to reveal deep truths about the physical reality in which we live. Combining his laws of motion and gravity, he was able to construct equations which he could solve having also developed the basic rules of what we now call calculus (1). A remarkable individual achievement, which unified a number of different phenomena – for example, the fall of an apple, the motion of the moon, the dynamics of the solar system – under the umbrella of one theory. Unification of gravity and the quantum still evades resolution. Credit: SLAC National Accelerator Lab. Coming up to the present day, this pursuit of unification continues. In summary, the objective now is to find a theory of everything (TOE), which is something that has occupied the physics community since the development of the quantum and relativity theories in the early 20th century. Of course, the task now is somewhat more challenging than that of Newton. 
He had ‘simply’ gravity to contend with, whereas now, physicists have so far discovered four fundamental forces – gravity, electromagnetism, the weak and strong nuclear forces – which govern the way the world works. Along the way in all this, we have accumulated a significant understanding of the Universe, both on a macroscopic scale (astrophysics, cosmology) and on a micro scale (quantum physics). Why do the laws of physics appear to be 'bio-friendly'? Credit: Source unknown. One surprising consequence of all this is that we have discovered that the Universe is a very unlikely place. So, what do I mean by this? The natural laws of physics and the values of the many fundamental constants that specify how these laws work appear to be tuned so that the Universe is bio-friendly. In other words, if we change the value of just one of the constants by a small amount, something invariably goes wrong, and the resulting universe is devoid of life. The extraordinary thing about this tuning process is just how finely-tuned it is. American physicist Lee Smolin (2) claims to have quantitatively determined the degree to which the cosmos is finely tuned when he says: “One can estimate the probability that the constants in our standard theories of the elementary particles and cosmology would, were they chosen randomly, lead to a world with carbon chemistry. That probability is less than one part in 10 to the power 220.” The reason why this is so significant for me is that this characteristic of the Universe started me on a personal journey to faith some 20 years ago. Clearly, in itself, the fine-tuning argument does not prove that there is (or is not) a God, but for me it was a strong pointer to the idea that there may be a guiding intelligence behind it all. 
At that time, I had no religious propensities at all and the idea of a creator God was anathema to me, but even I could appreciate the significance of the argument without the help of Lee Smolin’s mysterious calculations. This was just the beginning of a journey for me, which ultimately led to a spiritual encounter. At the time, this was very unwelcome, as I had always believed that the only reality was physical. However, God had other ideas, and a belief in a spiritual realm has changed my life. But that is another story, which I tell in some detail in the book if you are interested (3).

The purpose of this blog post is to pose a question. When we look at the world around us, and at the Universe at macro and micro scales, we can ask: did it all happen by blind chance, or is there a guiding hand – a source of information and intelligence (“the Word”) – behind it all?

There are a number of thought-provoking and intriguing examples of fine-tuning discussed in the book (3), but here I would like to consider a couple of topics not mentioned there, both of which focus on the micro scale of quantum physics.

If the protons and neutrons in this picture were 10 cm in diameter, then the quarks and electrons would be less than 0.1 mm in size and the entire atom would be about 10 km across. Credit: Source unknown.

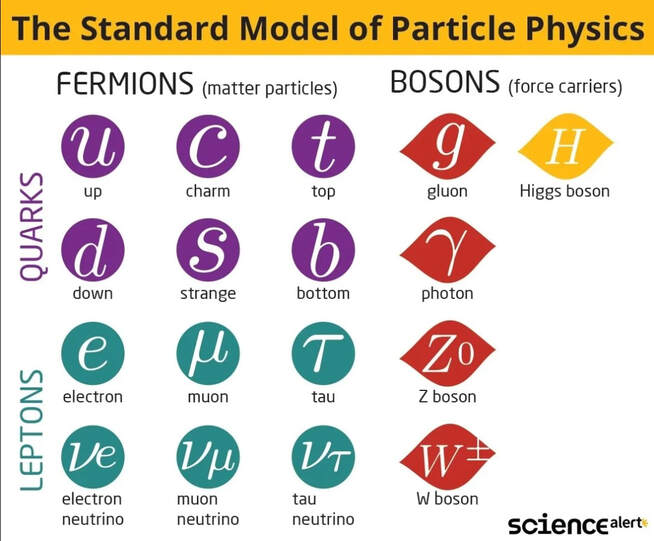

There is general agreement among physicists that something extraordinary occurred about 13.8 billion years ago, which in the standard model of cosmology is called the ‘Big Bang’ (BB). There is debate as to whether this was the beginning of our Universe, when space, time, matter and energy came into existence. Some punters have proposed other scenarios: that perhaps the BB marked the end of one universe and the beginning of another (ours), or that perhaps the ‘seed’ of our Universe had existed in a long-lived quiescent state until some quantum fluctuation kicked off a powerful expansion – the possibilities are endless. But one thing we do know is that the Universe then was very much smaller than it is now, unimaginably dense and ultra-hot. The evidence for this is incontrovertible, in the form of detailed observations of the cosmic microwave background (4).

If we adopt the standard model, the events at time zero are still a mystery as we do not have a TOE to say anything about them. However, within a billionth of a billionth of a billionth of a second after the BB, repulsive gravity stretched a tiny nugget of space-time by a huge factor – perhaps 10 to the power 30. This period of inflation (5), however, was unstable, and lasted only a similarly fleeting period of time. The energy of the field that created the expansion was dumped into the expanding space and transformed (through the mass-energy equivalence) into a soup of matter particles. It is noteworthy that we are not sure what kind of particles they were, but we do know that, at this stage of the process, they were not the ‘familiar’ ones that make up the atoms in our body. After a period of a few minutes, during which a cascade of rapid particle interactions took place throughout the embryonic cosmos, a population of protons, neutrons and electrons emerged.
In these early minutes of the Universe, the energy of electromagnetic radiation dominated the interactions and the expansion dynamics, disrupting the assembly of atoms. However, thereafter, there was a brief window of opportunity when the Universe was cool enough for this disruption to cease, but still hot enough for nuclear reactions to take place. During this interval, a population of about 76% hydrogen and 24% helium resulted, with a smattering of lithium (6).

In all this, an important feature is the formation of stable proton and neutron particles, without which, of course, there would be no prospect of the development of stars, galaxies and, ultimately, us. To ‘manufacture’ a proton, for example, you need two ‘up’ quarks and one ‘down’ quark (to give a positive electric charge), stably and precisely confined within a tiny volume of space by a system of eight gluons. Without dwelling on the details, the gluons are the strong force carriers which operate between the quarks using a system of three different types of force (arbitrarily labelled by three colours). Far from being a fundamental particle, the proton is composed of 11 separate particles. The association of quarks and gluons is so stable (and complex) that quarks are never observed in isolation. Similarly, neutrons comprise 11 particles with similar characteristics, apart from there being one ‘up’ quark and two ‘down’ quarks, to ensure a zero electric charge.

So what are we to say about all this? Is it likely that all this came about by blind chance? Clearly, the processes I have described are governed by complex rules – the laws of nature – to produce the Universe that we observe. So, in some sense the laws were already ‘imprinted’ on the fabric of space-time at or near time zero. But in the extremely brief fractions of a second after the BB, where did they come from? Who or what is the law giver?
Rhetorical questions all, but there are a lot of such questions in this post to highlight the notion that such complex behaviour (order) is unlikely to occur simply by ‘blind chance’.