
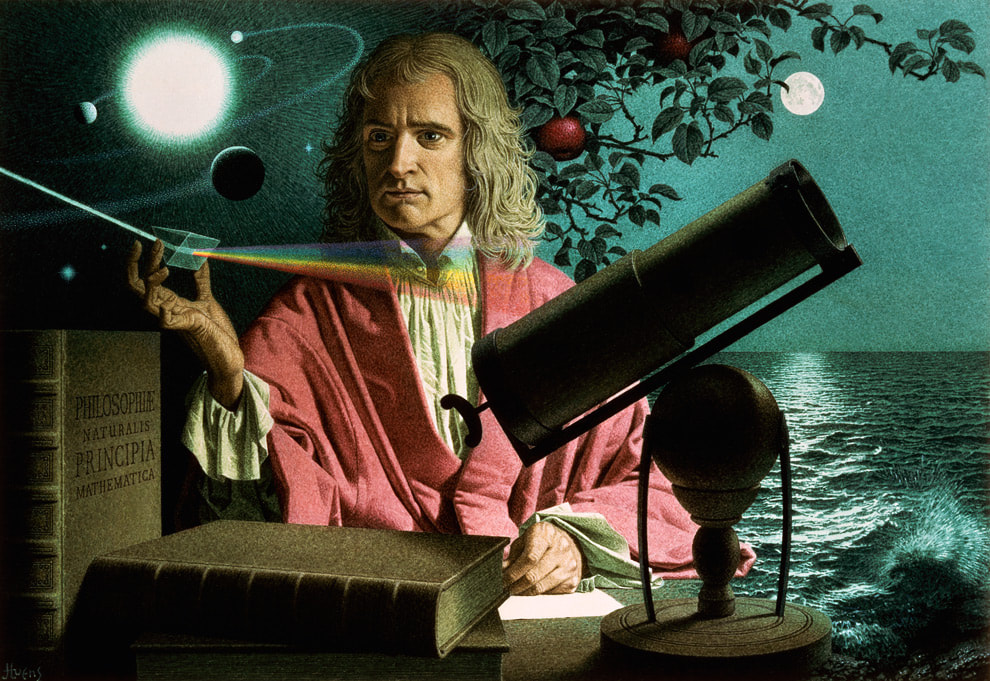

Graham writes …

Isaac Newton was the first to really appreciate that mathematics has the power to reveal deep truths about the physical reality in which we live. Combining his laws of motion and gravity, he was able to construct equations which he could solve, having also developed the basic rules of what we now call calculus (1). This was a remarkable individual achievement, which unified a number of different phenomena – for example, the fall of an apple, the motion of the moon, the dynamics of the solar system – under the umbrella of one theory.

Unification of gravity and the quantum still evades resolution. Credit: SLAC National Accelerator Lab.

Coming up to the present day, this pursuit of unification continues. The objective now is to find a theory of everything (TOE), which has occupied the physics community since the development of the quantum and relativity theories in the early 20th century. Of course, the task now is somewhat more challenging than that of Newton. He had ‘simply’ gravity to contend with, whereas physicists have so far discovered four fundamental forces – gravity, electromagnetism, the weak and strong nuclear forces – which govern the way the world works. Along the way, we have accumulated a significant understanding of the Universe, both on a macroscopic scale (astrophysics, cosmology) and on a micro scale (quantum physics).

Why do the laws of physics appear to be 'bio-friendly'? Credit: Source unknown.

One surprising consequence of all this is that we have discovered that the Universe is a very unlikely place. So, what do I mean by this? The natural laws of physics and the values of the many fundamental constants that specify how these laws work appear to be tuned so that the Universe is bio-friendly.
In other words, if we change the value of just one of the constants by a small amount, something invariably goes wrong, and the resulting universe is devoid of life. The extraordinary thing about this tuning is just how fine it is. American physicist Lee Smolin (2) claims to have quantitatively determined the degree to which the cosmos is finely tuned when he says: “One can estimate the probability that the constants in our standard theories of the elementary particles and cosmology would, were they chosen randomly, lead to a world with carbon chemistry. That probability is less than one part in 10 to the power 220.”

The reason why this is so significant for me is that this characteristic of the Universe started me on a personal journey to faith some 20 years ago. Clearly, in itself, the fine-tuning argument does not prove that there is (or is not) a God, but for me it was a strong pointer to the idea that there may be a guiding intelligence behind it all. At that time, I had no religious propensities at all and the idea of a creator God was anathema to me, but even I could appreciate the significance of the argument without the help of Lee Smolin’s mysterious calculations. This was just the beginning of a journey for me, which ultimately led to a spiritual encounter. At the time, this was very unwelcome, as I had always believed that the only reality was physical. However, God had other ideas, and a belief in a spiritual realm has changed my life. But that is another story, which I tell in some detail in the book if you are interested (3).

The purpose of this blog post is to pose a question. When we look at the world around us, and at the Universe at macro and micro scales, we can ask: did it all happen by blind chance, or is there a guiding hand – a source of information and intelligence (“the Word”) – behind it all?
There are a number of thought-provoking and intriguing examples of fine-tuning discussed in the book (3), but here I would like to consider a couple of topics not mentioned there, both of which focus on the micro scale of quantum physics.

If the protons and neutrons in this picture were 10 cm in diameter, then the quarks and electrons would be less than 0.1 mm in size and the entire atom would be about 10 km across. Credit: Source unknown.

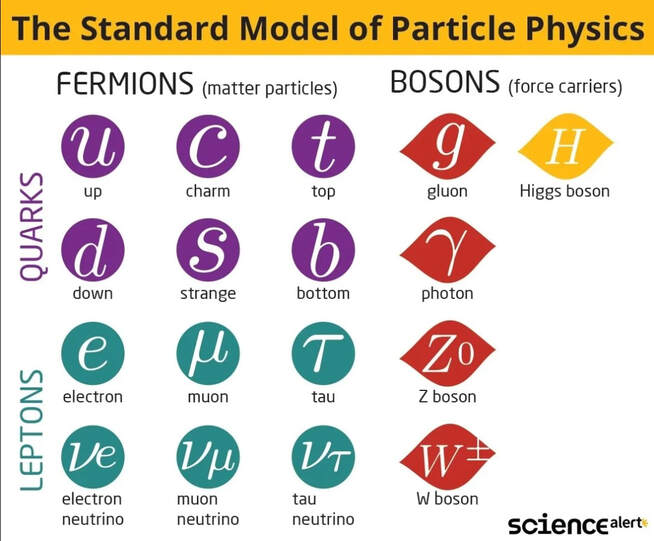

There is general agreement among physicists that something extraordinary occurred then, which in the standard model of cosmology is called the ‘Big Bang’ (BB). There is debate as to whether this was the beginning of our Universe, when space, time, matter and energy came into existence. Some punters have proposed other scenarios: that perhaps the BB marked the end of one universe and the beginning of another (ours), or that perhaps the ‘seed’ of our Universe had existed in a long-lived quiescent state until some quantum fluctuation kicked off a powerful expansion – the possibilities are endless. But one thing we do know about 13.8 billion years ago is that the Universe then was very much smaller than it is now, unimaginably dense and ultra-hot. The evidence for this is incontrovertible, in the form of detailed observations of the cosmic microwave background (4).

If we adopt the standard model, the events at time zero are still a mystery, as we do not have a TOE to say anything about them. However, within a billionth of a billionth of a billionth of a second after the BB, repulsive gravity stretched a tiny nugget of space-time by a huge factor – perhaps 10 to the power 30. This period of inflation (5), however, was unstable, and lasted only a similarly fleeting period of time. The energy of the field that created the expansion was dumped into the expanding space and transformed (through the mass-energy equivalence) into a soup of matter particles. It is noteworthy that we are not sure what kind of particles they were, but we do know that, at this stage of the process, they were not the ‘familiar’ ones that make up the atoms in our body. After a period of a few minutes, during which a cascade of rapid particle interactions took place throughout the embryonic cosmos, a population of protons, neutrons and electrons emerged.
In these early minutes of the Universe, the energy of electromagnetic radiation dominated the interactions and the expansion dynamics, disrupting the assembly of atoms. However, thereafter, there was a brief window of opportunity when the Universe was cool enough for this disruption to cease, but still hot enough for nuclear reactions to take place. During this interval, a population of about 76% hydrogen and 24% helium resulted, with a smattering of lithium (6).

In all this, an important feature is the formation of stable proton and neutron particles, without which, of course, there would be no prospect of the development of stars, galaxies and, ultimately, us. To ‘manufacture’ a proton, for example, you need two ‘up’ quarks and one ‘down’ quark (to give a positive electric charge), stably and precisely confined within a tiny volume of space by a system of eight gluons. Without dwelling on the details, the gluons are the strong force carriers which operate between the quarks using a system of three different types of force (arbitrarily labelled by three colours). Far from being a fundamental particle, the proton is composed of 11 separate particles. The association of quarks and gluons is so stable (and complex) that quarks are never observed in isolation. Similarly, neutrons comprise 11 particles with similar characteristics, apart from there being one ‘up’ quark and two ‘down’ quarks, to ensure a zero electric charge.

So what are we to say about all this? Is it likely that all this came about by blind chance? Clearly, the processes I have described are governed by complex rules – the laws of nature – to produce the Universe that we observe. So, in some sense the laws were already ‘imprinted’ on the fabric of space-time at or near time zero. But in the extremely brief fractions of a second after the BB, where did they come from? Who or what is the law giver?
Rhetorical questions all, but there are a lot of such questions in this post to highlight the notion that such complex behaviour (order) is unlikely to occur simply by ‘blind chance’.
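For readers who like to check the bookkeeping, the quark arithmetic described above for the proton and neutron can be sketched in a few lines of Python. This is only an illustrative sketch: the charge values of +2/3 and -1/3 (in units of the elementary charge) are the standard textbook values for the up and down quarks and are not quoted in the post, and the names `QUARK_CHARGE`, `GLUON_TYPES` and `total_charge` are made up for this example. The tally of 11 simply follows the post's own way of counting, three quarks plus eight gluons.

```python
from fractions import Fraction

# Assumed standard quark charges, in units of the elementary charge e
# (these values are not given in the post itself).
QUARK_CHARGE = {"up": Fraction(2, 3), "down": Fraction(-1, 3)}

# Number of gluons quoted in the post.
GLUON_TYPES = 8


def total_charge(quarks):
    """Add up the electric charges of a list of quark names."""
    return sum((QUARK_CHARGE[q] for q in quarks), Fraction(0))


proton = ["up", "up", "down"]     # two 'up' quarks and one 'down' quark
neutron = ["up", "down", "down"]  # one 'up' quark and two 'down' quarks

for name, quarks in (("proton", proton), ("neutron", neutron)):
    # Constituents as counted in the post: quarks plus gluons.
    constituents = len(quarks) + GLUON_TYPES
    print(f"{name}: charge = {total_charge(quarks)} e, "
          f"constituents as counted in the post = {constituents}")
```

Using exact fractions rather than decimals keeps the sums free of rounding error, so the proton's charge comes out as exactly one unit and the neutron's as exactly zero.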

There have been many ‘eureka moments’ in physics, when suddenly things fall into place and order is revealed. One such situation arose in the 1950s, when the increasing power of particle colliders was generating discoveries of many new particles. So many, in fact, that the physics community was running out of labels for the members of this growing population. Then in 1961, a physicist named Murray Gell-Mann came up with a scheme based upon a branch of mathematics called group theory which made sense of the apparent chaos. His insight was that all the newly discovered entities were made of a few smaller, fundamental particles that he called ‘quarks’. As we have seen above, protons and neutrons comprise three quarks, and mesons, for example, are made up of two.

Over time this has evolved into something we now refer to as the standard model of particle physics, which consists of 6 quarks, 6 leptons, 4 force carrier particles and the Higgs boson, as can be seen in the diagram (the model also includes each particle’s corresponding antiparticle). These particles are considered to be fundamental – that is, they are indivisible. We have talked about this in a couple of previous blog posts – in October 2021 and March 2022 – and it might be worth having a look back at these to refresh your memory.

Also, as we have seen before, we know that the standard model is not perfect or complete. Gravity’s force carrier, the graviton, is missing, as are any potential particles associated with the mysterious dark universe – dark matter and dark energy. Furthermore, there is an indication of missing constituents in the model, highlighted by the recent anomalous experimental results describing the muon’s magnetic moment (see March 2022 post).

I have recently been reading a book (7) by physicist and author Paul Davies, and, although his writings are purely secular, I think my desire to write on today’s topic has been inspired by Davies’s thoughts on many philosophical conundrums found there.
The fact that the two examples I have discussed above have an underlying mathematical structure that we can comprehend is striking. Furthermore, there are many profound examples of this structure leading to successful predictions of physical phenomena, such as antimatter (1932), the Higgs boson (2012) and gravitational waves (2015). Davies expresses a view that if we can extract sense from nature, then it must be that nature itself is imbued with ‘sense’ in some way. There is no reason why nature should display this mathematical structure, and certainly no reason why we should be able to comprehend it. Would processes that arose by ‘blind chance’ be underpinned by a mathematical, predictive structure? – I’m afraid, another question left hanging in the air!

I leave the last sentiments to Paul Davies, as I cannot express them in any better way.

“How has this come about? How have human beings become privy to nature’s subtle and elegant scheme? Somehow the Universe has engineered, not just its own awareness, but its own comprehension. Mindless, blundering atoms have conspired to spawn beings who are able not merely to watch the show, but to unravel the plot, to engage with the totality of the cosmos and the silent mathematical tune to which it dances.”

Graham Swinerd
Southampton
March 2023

(1) Graham Swinerd and John Bryant, From the Big Bang to Biology: where is God?, Kindle Direct Publishing, 2020, Chapter 2.

(2) Lee Smolin, Three Roads to Quantum Gravity, Basic Books, 2001, p. 201.

(3) Graham Swinerd and John Bryant, From the Big Bang to Biology: where is God?, Kindle Direct Publishing, 2020, Chapter 4.

(4) Ibid., pp. 60-62.

(5) Ibid., pp. 67-71.

(6) Ibid., primordial nucleosynthesis, p. 62.

(7) Paul Davies, What’s Eating the Universe? and other cosmic questions, Penguin Books, 2021, p. 158.


Authors: John Bryant and Graham Swinerd comment on biology, physics and faith.
